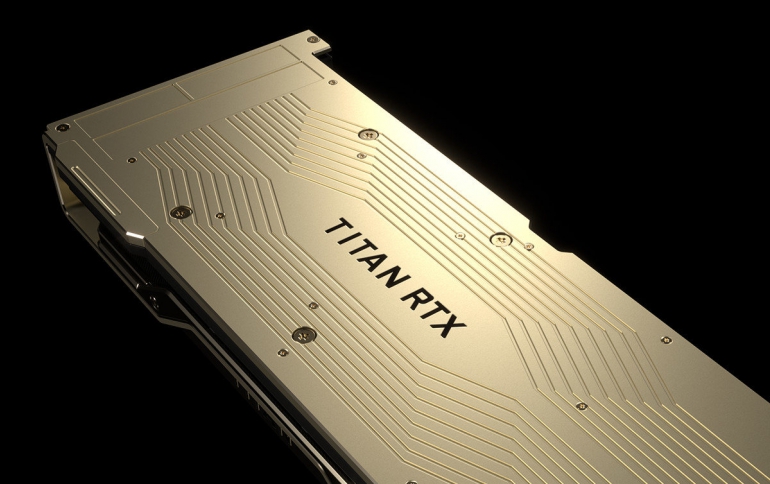

NVIDIA's New Flagship Graphics Card is the $2500 TITAN RTX

NVIDIA today introduced TITAN RTX, the world’s most powerful desktop GPU, designed for AI research, data science and creative applications.

Driven by the new NVIDIA Turing architecture, TITAN RTX — dubbed T-Rex — delivers 130 teraflops of deep learning performance and 11 GigaRays of ray-tracing performance.

Turing features new RT Cores to accelerate ray tracing, plus new multi-precision Tensor Cores for AI training and inferencing. These two engines, along with more powerful compute and enhanced rasterization, enable capabilities that will transform the work of millions of developers, designers and artists across multiple industries. TITAN RTX delivers:

- 576 multi-precision Turing Tensor Cores, providing up to 130 teraflops of deep learning performance.

- 72 Turing RT Cores, delivering up to 11 GigaRays per second of real-time ray-tracing performance.

- 24GB of high-speed GDDR6 memory with 672GB/s of bandwidth — 2x the memory of previous-generation TITAN GPUs — to fit larger models and datasets.

- 100GB/s NVIDIA NVLink can pair two TITAN RTX GPUs to scale memory and compute.

- Performance and memory bandwidth for real-time 8K video editing.

- VirtualLink port provides the performance and connectivity required by next-gen VR headsets.

TITAN RTX provides multi-precision Turing Tensor Cores for breakthrough performance from FP32, FP16, INT8 and INT4, allowing faster training and inference of neural networks. It offers twice the memory capacity of previous generation TITAN GPUs, along with NVLink to allow researchers to experiment with larger neural networks and data sets.

By the numbers, the Titan RTX looks a lot like a more powerful GeForce RTX 2080 Ti, although it's not a consumer-focused graphics card. The card is based on the same TU102 GPU as NVIDIA’s consumer flagship, but while the RTX 2080 Ti used a slightly cut-down version of the GPU, Titan RTX gets a fully enabled chip, similar to NVIDIA’s best Quadro cards.
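
The difference between the fully enabled and the cut-down TU102 can be illustrated with a little arithmetic. The sketch below assumes the per-SM layout NVIDIA has published for Turing (64 CUDA cores, 8 Tensor Cores and 1 RT Core per streaming multiprocessor); those per-SM figures and the helper name `sm_breakdown` are assumptions for illustration and do not come from this article. Only the CUDA core counts (4608 and 4352) are taken from the specifications quoted here.

```python
# Assumed Turing SM layout (not stated in the article).
CUDA_CORES_PER_SM = 64
TENSOR_CORES_PER_SM = 8
RT_CORES_PER_SM = 1


def sm_breakdown(cuda_cores: int) -> dict:
    """Derive SM, Tensor Core and RT Core counts from a CUDA core count."""
    sms, remainder = divmod(cuda_cores, CUDA_CORES_PER_SM)
    if remainder:
        raise ValueError("CUDA core count is not a whole number of SMs")
    return {
        "sms": sms,
        "tensor_cores": sms * TENSOR_CORES_PER_SM,
        "rt_cores": sms * RT_CORES_PER_SM,
    }


if __name__ == "__main__":
    for name, cores in [("Titan RTX", 4608), ("RTX 2080 Ti", 4352)]:
        print(name, sm_breakdown(cores))
```

Under these assumptions, 4608 CUDA cores correspond to 72 SMs, which lines up with the 576 Tensor Cores and 72 RT Cores quoted for TITAN RTX, while 4352 cores correspond to 68 SMs and 544 Tensor Cores.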

| | Titan RTX | Titan V | RTX 2080 Ti Founders Edition | Tesla V100 (PCIe) |
| --- | --- | --- | --- | --- |
| CUDA Cores | 4608 | 5120 | 4352 | 5120 |
| Tensor Cores | 576 | 640 | 544 | 640 |
| Core Clock | 1350MHz | 1200MHz | 1350MHz | ? |
| Boost Clock | 1770MHz | 1455MHz | 1635MHz | 1370MHz |
| Memory Clock | 14Gbps GDDR6 | 1.7Gbps HBM2 | 14Gbps GDDR6 | 1.75Gbps HBM2 |
| Memory Bus Width | 384-bit | 3072-bit | 352-bit | 4096-bit |
| Memory Bandwidth | 672GB/sec | 653GB/sec | 616GB/sec | 900GB/sec |
| VRAM | 24GB | 12GB | 11GB | 16GB |
| L2 Cache | 6MB | 4.5MB | 5.5MB | 6MB |
| Single Precision | 16.3 TFLOPS | 13.8 TFLOPS | 14.2 TFLOPS | 14 TFLOPS |
| Double Precision | 0.51 TFLOPS | 6.9 TFLOPS | 0.44 TFLOPS | 7 TFLOPS |
| Tensor Performance (FP16 w/FP32 Acc) | 130 TFLOPS | 110 TFLOPS | 57 TFLOPS | 112 TFLOPS |
| GPU | TU102 (754mm²) | GV100 (815mm²) | TU102 (754mm²) | GV100 (815mm²) |
| Transistor Count | 18.6B | 21.1B | 18.6B | 21.1B |
| TDP | 280W | 250W | 260W | 250W |
| Form Factor | PCIe | PCIe | PCIe | PCIe |
| Cooling | Active | Active | Active | Passive |
| Manufacturing Process | TSMC 12nm FFN | TSMC 12nm FFN | TSMC 12nm FFN | TSMC 12nm FFN |
| Architecture | Turing | Volta | Turing | Volta |
| Price | $2499 | $2999 | $1199 | ~$10000 |
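
Two rows of the table can be derived from the others, which is a handy sanity check. The sketch below assumes the usual rules of thumb: memory bandwidth is the per-pin data rate times the bus width divided by 8 bits per byte, and peak single-precision throughput is 2 floating-point operations (one fused multiply-add) per CUDA core per clock at the boost clock. The function names and the `cards` dictionary are illustrative, and the formulas are a general convention rather than anything stated by NVIDIA in this announcement.

```python
# Figures copied from the comparison table: CUDA cores, boost clock (MHz),
# memory data rate per pin (Gbps) and memory bus width (bits).
cards = {
    "Titan RTX": (4608, 1770, 14.0, 384),
    "Titan V": (5120, 1455, 1.7, 3072),
    "RTX 2080 Ti FE": (4352, 1635, 14.0, 352),
    "Tesla V100 (PCIe)": (5120, 1370, 1.75, 4096),
}


def memory_bandwidth_gbs(gbps_per_pin: float, bus_bits: int) -> float:
    """Peak memory bandwidth in GB/s: data rate per pin * pins / 8 bits per byte."""
    return gbps_per_pin * bus_bits / 8


def fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Peak FP32 throughput in TFLOPS, assuming one FMA (2 FLOPs) per core per clock."""
    return 2 * cuda_cores * boost_mhz / 1e6


if __name__ == "__main__":
    for name, (cores, boost, rate, bus) in cards.items():
        bw = memory_bandwidth_gbs(rate, bus)
        tf = fp32_tflops(cores, boost)
        print(f"{name}: {bw:.0f} GB/s, {tf:.1f} TFLOPS FP32")
```

For the Titan RTX this reproduces the listed 672GB/sec and 16.3 TFLOPS. The Titan V comes out at roughly 14.9 TFLOPS under the same formula, against the 13.8 TFLOPS listed, so that entry was presumably computed from a different clock.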

In terms of design, the Titan RTX closely follows the styling of the GeForce family. It uses the open-air dual-fan cooler that NVIDIA switched to this generation, rather than a traditional blower like the one on the Titan V or the current Quadro cards.

The card gets the same port arrangement as the current Quadro and GeForce cards, with three DisplayPort 1.4 outputs, an HDMI 2.0b port, and a USB-C port that supports DP alt mode as well as the VirtualLink standard for VR headsets.

TITAN RTX will be available later this month in the U.S. and Europe for $2,499.

NVIDIA PhysX Goes Open Source

In related news, NVIDIA announced that its popular physics simulation engine, PhysX, is going open source.

"We’re doing this because physics simulation — long key to immersive games and entertainment — turns out to be more important than we ever thought," Nvidia said.

Physics simulation dovetails with AI, robotics and computer vision, self-driving vehicles, and high-performance computing.

According to NVIDIA, PhysX will now be the only free, open-source physics solution that takes advantage of GPU acceleration and can handle large virtual environments.

It will be available as open source starting today under the BSD 3-Clause license.