U.S. Lawmakers Push Facebook and Twitter to Make Rules Clearer

In the congressional hearings on misinformation and election interference, Facebook and Twitter committed to trying to get better at explaining how their automated systems sort and block content on their social networks, and how they spot material that needs to be removed.

Facebook Chief Operating Officer Sheryl Sandberg and Twitter Chief Executive Officer Jack Dorsey are under pressure to be more transparent and to explain how they plan to solve problems such as abuse, misinformation and manipulation.

The hearings in the House and the Senate touched on some of the deepest tech policy issues, and allowed executives to explain in depth the difficulties of managing their networks, and where and how they have failed to do so.

Hopefully, the hearings did not devolve into apologies from the executives for trying to police their networks in ways that affected certain political viewpoints.

Both Mr. Dorsey's and Ms. Sandberg's testimony highlighted the complexities of their systems and helped underscore the difficulties lawmakers will face in figuring out ways to ensure that tech companies are guarding people's political freedoms and privacy while protecting their services from those who would spread misinformation and propaganda.

"There's a lot of confusion around our rules and around our enforcement," Dorsey said during solo testimony at an afternoon hearing in the House focused on content decisions. "We intend to fix it."

Both Facebook and Twitter are platforms that could be considered open forums for debate and free expression, but both remain businesses, meaning they have to make money from ad-targeting and data-collection practices.

On Wednesday, members of the Senate Intelligence Committee asked, for example, why the companies can't let users know when they're talking to a bot as opposed to a human, as a way to expose foreign activity.

Senators asked questions on how the algorithms work, and why some content gets banned while other posts stay up. In the Senate and House, lawmakers also sought insight into the number of human moderators the companies use, their processes for collaborating with each other or law enforcement, and definitions of abuse or fairness.

Facebook's Sandberg said that the company has invested in hiring thousands of workers to improve its security procedures, including its content policies. When questioned about some of the worst content Facebook has hosted, Sandberg agreed with the senators that she was disappointed to find it on the social network. Ads that discourage people from voting have "no place on Facebook," she said, nor does discriminatory advertising, even though Facebook's systems can't guarantee they won't appear.

Twitter's Dorsey said that his company was engaged in a grand restructuring with uncertain next steps for tackling a series of problems.

It would be difficult, Dorsey said, to reliably label bots because it's possible to program an account to act like a human. Twitter sometimes fails to sufficiently prioritize the take-down of violent posts or tweets selling drugs, he added. It also needs to put less of a burden on the victims of hate and harassment to report abuse, he said, and all of the companies should have regular check-ins to share tips about foreign interference.

Dorsey was also open to discussing measures that would limit user-generated content and ads related to drug dealing or sex trafficking.

The Justice Department issued a statement saying it is convening meetings with several state attorneys general "to discuss a growing concern that these companies may be hurting competition and intentionally stifling the free exchange of ideas on their platforms."

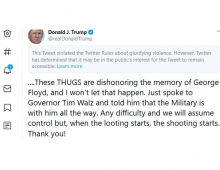

U.S. President Donald Trump has been vaguely suggesting the government could take action over what he claims is censorship of conservatives by social-media companies. Both Dorsey and Sandberg reject the contention.

When Dorsey was asked how many people he employs to moderate content, he said, "We don't want to think of this in terms of people." He said he would get back to the committee with more information. Technological jargon and talk from Dorsey about the hundreds of "signals" that contribute to content decisions also occasionally frustrated some members of Congress.

Google was absent from the hearing. The Senate panel had invited Larry Page, CEO of parent company Alphabet Inc., but Google tried to send its chief legal officer, whom the committee rejected as insufficiently high-level.