NVIDIA Adds Liquid-Cooled GPUs for Sustainable, Efficient Computing

In the worldwide effort to halt climate change, Zac Smith is part of a growing movement to build data centers that deliver both high performance and energy efficiency.

He’s head of edge infrastructure at Equinix, a global service provider that manages more than 240 data centers and is committed to becoming the first in its sector to be climate neutral.

“We have 10,000 customers counting on us for help with this journey. They demand more data and more intelligence, often with AI, and they want it in a sustainable way,” said Smith, a Juilliard grad who got into tech in the early 2000s building websites for fellow musicians in New York City.

Marking Progress in Efficiency

As of April, Equinix has issued $4.9 billion in green bonds. They’re investment-grade instruments that Equinix will apply to reducing environmental impact by optimizing power usage effectiveness (PUE), an industry metric of how much of the energy a data center uses goes directly to computing tasks.

Data center operators are trying to shave that ratio ever closer to the ideal of 1.0 PUE. Equinix facilities have an average 1.48 PUE today with its best new data centers hitting less than 1.2.
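To make the metric concrete, here is a minimal sketch using the standard definition of PUE as total facility energy divided by the energy delivered to IT equipment. The function names `pue` and `overhead_share` are illustrative, not part of any Equinix or NVIDIA tool, and the 1.2 figure is treated as an upper bound because the text says "less than 1.2."

```python
def pue(total_facility_energy_kwh: float, it_energy_kwh: float) -> float:
    """PUE = total facility energy / energy delivered to IT equipment."""
    if it_energy_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_facility_energy_kwh / it_energy_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy that does not reach IT equipment."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below the ideal of 1.0")
    return 1.0 - 1.0 / pue_value


# PUE figures quoted in the article; the labels are only for display.
quoted = [("Equinix average", 1.48), ("Best new sites (upper bound)", 1.2)]
for label, value in quoted:
    print(f"{label}: PUE {value} -> {overhead_share(value):.1%} overhead")
```

Read this way, a 1.48 PUE means roughly a third of the facility's energy goes to cooling, power conversion and other overhead rather than to computing, while a PUE of 1.0 would mean none does.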

Equinix is making steady progress in the energy efficiency of its data centers as measured by PUE (inset).

In another step forward, Equinix opened in January a dedicated facility to pursue advances in energy efficiency. One part of that work focuses on liquid cooling.

Born in the mainframe era, liquid cooling is maturing in the age of AI. It’s now widely used inside the world’s fastest supercomputers in a modern form called direct-chip cooling.

Liquid cooling is the next step in accelerated computing for NVIDIA GPUs that already deliver up to 20x better energy efficiency on AI inference and high-performance computing jobs than CPUs.

Efficiency Through Acceleration

If you switched all the CPU-only servers running AI and HPC worldwide to GPU-accelerated systems, you could save a whopping 11 trillion watt-hours of energy a year. That’s like saving the energy more than 1.5 million homes consume in a year.
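As a quick sanity check on that comparison, the short sketch below divides the quoted savings by the quoted number of homes. Both inputs come straight from the paragraph above; the per-home figure is only what those two numbers imply, not a separately sourced statistic, and the variable names are illustrative.

```python
SAVED_WH_PER_YEAR = 11e12  # 11 trillion watt-hours, as quoted above
HOMES = 1.5e6              # "more than 1.5 million homes", taken as a lower bound

saved_twh = SAVED_WH_PER_YEAR / 1e12
# Implied annual consumption per home, converted from Wh to kWh.
per_home_kwh = SAVED_WH_PER_YEAR / HOMES / 1000

print(f"Savings: {saved_twh:.0f} TWh per year")
print(f"Implied use per home: about {per_home_kwh:,.0f} kWh per year")
```

Because the text says "more than" 1.5 million homes, the implied per-home figure of about 7,333 kWh a year is a ceiling rather than an exact value.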

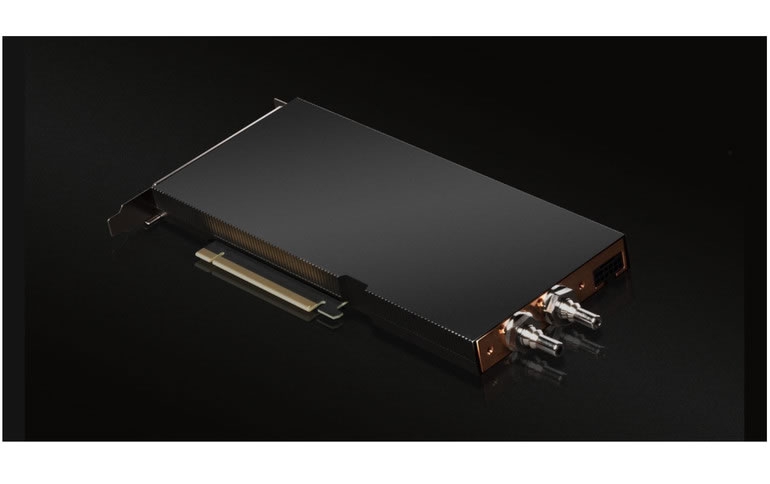

Today, NVIDIA adds to its sustainability efforts with the release of our first data center PCIe GPU using direct-chip cooling.

Equinix is qualifying the A100 80GB PCIe Liquid-Cooled GPU for use in its data centers as part of a comprehensive approach to sustainable cooling and heat capture. The GPUs are sampling now and will be generally available this summer.

Saving Water and Power

“This marks the first liquid-cooled GPU introduced to our lab, and that’s exciting for us because our customers are hungry for sustainable ways to harness AI,” said Smith.

Data center operators aim to eliminate chillers that evaporate millions of gallons of water a year to cool the air inside data centers. Liquid cooling promises systems that recycle small amounts of fluids in closed systems focused on key hot spots.

“We’ll turn a waste into an asset,” he said.

Same Performance, Less Power

In separate tests, both Equinix and NVIDIA found a data center using liquid cooling could run the same workloads as an air-cooled facility while using about 30 percent less energy. NVIDIA estimates the liquid-cooled data center could hit 1.15 PUE, far below 1.6 for its air-cooled cousin.
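The two PUE estimates can be related to the energy savings with a little arithmetic. The sketch below assumes, as a simplification, that both facilities deliver the same energy to IT equipment, so total facility energy scales with PUE. The 1,000 MWh IT load and the function name `facility_energy` are hypothetical placeholders; only the 1.15 and 1.6 PUE values come from the text.

```python
def facility_energy(it_energy_mwh: float, pue: float) -> float:
    """Total facility energy implied by an IT load and a PUE value."""
    return it_energy_mwh * pue


IT_LOAD_MWH = 1000.0  # hypothetical IT load, identical for both facilities
PUE_AIR = 1.6         # air-cooled estimate quoted above
PUE_LIQUID = 1.15     # liquid-cooled estimate quoted above

air = facility_energy(IT_LOAD_MWH, PUE_AIR)
liquid = facility_energy(IT_LOAD_MWH, PUE_LIQUID)
savings = 1.0 - liquid / air  # independent of the IT load chosen

print(f"Air-cooled:    {air:,.0f} MWh")
print(f"Liquid-cooled: {liquid:,.0f} MWh")
print(f"Reduction:     {savings:.1%}")
```

Under this assumption the reduction works out to about 28 percent, in line with the roughly 30 percent reported. The sketch attributes all savings to the change in PUE; any change in the energy drawn by the servers themselves would shift the result.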

Liquid-cooled data centers can pack twice as much computing into the same space, too. That’s because the liquid-cooled A100 GPUs use just one PCIe slot; air-cooled A100 GPUs fill two.

NVIDIA sees power savings, density gains with liquid cooling.

At least a dozen system makers plan to incorporate these GPUs into their offerings later this year. They include ASUS, ASRock Rack, Foxconn Industrial Internet, GIGABYTE, H3C, Inspur, Inventec, Nettrix, QCT, Supermicro, Wiwynn and xFusion.

A Global Trend

Regulations setting energy-efficiency standards are pending in Asia, Europe and the U.S. That’s motivating banks and other large data center operators to evaluate liquid cooling, too.

And the technology isn’t limited to data centers. Cars and other systems need it to cool high-performance systems embedded inside confined spaces.

The Road to Sustainability

“This is the start of a journey,” said Smith of the debut of liquid-cooled mainstream accelerators.

Indeed, we plan to follow up the A100 PCIe card with a version next year using the H100 Tensor Core GPU based on the NVIDIA Hopper architecture. We plan to support liquid cooling in our high-performance data center GPUs and our NVIDIA HGX platforms for the foreseeable future.

For fast adoption, today’s liquid-cooled GPUs deliver the same performance for less energy. In the future, we expect these cards will provide an option of getting more performance for the same energy, something users say they want.

“Measuring wattage alone is not relevant; the performance you get for the carbon impact you have is what we need to drive toward,” said Smith.

Learn more about our new A100 PCIe liquid-cooled GPUs.