Intel Pushes For Widespread Deployment of Optane DC Persistent Memory

Intel today launched its beta program for Intel Optane DC persistent memory, offering early access to the new memory technology set for general availability in the first half of 2019.

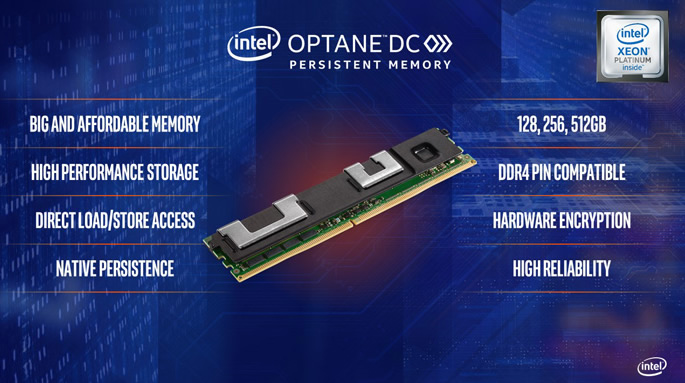

Intel Optane DC persistent memory represents a new class of memory that Intel says is architected to extract more value from data. Intel claims that, unlike traditional DRAM, Optane DC persistent memory will combine high capacity, affordability and persistence. According to Intel, systems and services deploying the technology will bring advances to a wide range of data center use cases, including analytics, databases and in-memory databases, artificial intelligence, high-capacity virtual machines and containers, and content delivery networks. Intel says the technology fundamentally changes data center resiliency, cutting in-memory database restart times from days or hours to minutes or seconds. Because a database can be loaded into persistent memory once, the server no longer has to reload that data into memory every time it boots. The time savings are real: think of a server that is ready to go in two minutes instead of 20. Persistent memory also lowers latency by giving access to stored data over the memory bus rather than across the I/O bus.

Intel's persistent memory modules come in capacities of up to 512 GB, allowing a two-socket server to support 6 TB of persistent memory alongside 1.5 TB of regular DDR4 memory. Persistent memory can be used as storage (like an SSD, in App Direct mode) or as RAM for applications (in Memory mode). This means a two-socket server can deliver large memory capacity to existing operating systems and applications.
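The headline capacity figures follow from simple multiplication. The sketch below assumes a population the article does not spell out: six memory channels per socket, each holding one 512 GB Optane module and one 128 GB DDR4 DIMM, with 1 TB counted as 1024 GB.

```python
# Hypothetical population (assumption, not from the article):
# six memory channels per socket, each with one Optane module and one DDR4 DIMM.
SOCKETS = 2
CHANNELS_PER_SOCKET = 6
OPTANE_MODULE_GB = 512   # largest Optane DC module size cited in the article
DDR4_DIMM_GB = 128       # assumed DDR4 DIMM size

def platform_capacity_gb(sockets, channels, module_gb):
    """Total capacity when every channel on every socket holds one module."""
    return sockets * channels * module_gb

optane_gb = platform_capacity_gb(SOCKETS, CHANNELS_PER_SOCKET, OPTANE_MODULE_GB)
dram_gb = platform_capacity_gb(SOCKETS, CHANNELS_PER_SOCKET, DDR4_DIMM_GB)

print(f"Optane: {optane_gb} GB ({optane_gb / 1024:g} TB)")  # Optane: 6144 GB (6 TB)
print(f"DDR4:   {dram_gb} GB ({dram_gb / 1024:g} TB)")      # DDR4:   1536 GB (1.5 TB)
```

Other module mixes reach the same totals; this is only one plausible layout.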

The Hardware Beta Program is available to original equipment manufacturers (OEMs) and cloud service providers (CSPs) including Microsoft, Cisco, Alibaba, Dell, Fujitsu, Google Cloud, Hewlett Packard Enterprise, Lenovo, Oracle and Tencent. Intel is also working with independent software vendors (ISVs) to ensure applications and operating environments take full advantage of the technology.

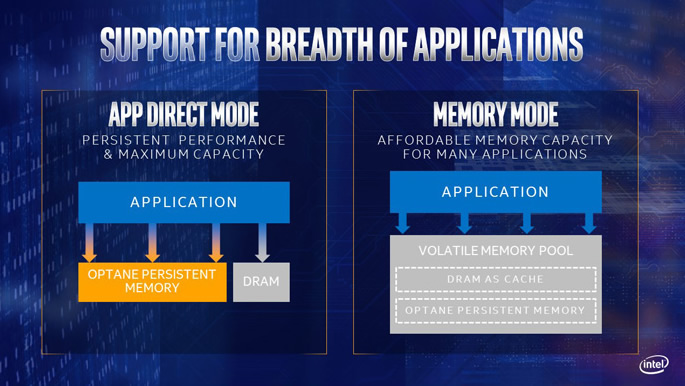

Intel also announced new capabilities that Optane DC persistent memory delivers through two operating modes: App Direct mode and Memory mode. The mode determines which capabilities of the memory are active and available to software.

Applications that have been specifically tuned can take advantage of App Direct mode to receive the full value of the product’s native persistence and larger capacity. In Memory mode, applications running in a supported operating system or virtual environment can use the product as volatile memory, taking advantage of the additional system capacity made possible from module sizes up to 512 GB without needing to rewrite software.

To understand the modes, keep in mind that servers will be populated with a combination of DRAM and Intel Optane persistent memory. DRAM has the lowest memory latency; Intel Optane persistent memory has slightly higher latency but offers affordable capacity and data persistence.

When configured for Memory Mode, the applications and operating system perceive a pool of volatile memory, no differently than on today's DRAM-only systems. No persistent memory programming is required in the applications, and data is not retained in the event of a power loss; persistence is available only in the second mode, App Direct.

In Memory Mode, the DRAM acts as a cache for the most frequently accessed data, while the Optane persistent memory provides the large memory capacity. Cache management is handled by the Xeon Scalable processor's memory controller. When data is requested, the memory controller first checks the DRAM cache; if the data is present, the response latency is identical to DRAM. If it is not, the data is read from the Optane persistent memory with slightly longer latency. Applications whose access patterns the memory controller can predict will have a higher cache hit rate and should see performance close to all-DRAM configurations, while workloads with highly random access over a wide address range may see some performance difference compared with DRAM alone.
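The hit-rate argument can be illustrated with a toy model. This is a simplification of our own, not a description of Intel's memory controller: the DRAM is modeled as a direct-mapped cache in front of a larger Optane address space, and the latency numbers are placeholders chosen only to show the trend. The effective latency is the hit-rate-weighted mix of the two tiers, compared here for a workload with a small hot working set and one that reads randomly across the whole address range.

```python
import random

# Placeholder latencies in nanoseconds (illustrative assumptions only).
DRAM_NS = 100
OPTANE_NS = 350

def simulate(lines, cache_lines):
    """Direct-mapped cache model: each line index maps to exactly one slot."""
    slots = [None] * cache_lines
    hits = 0
    for line in lines:
        slot = line % cache_lines
        if slots[slot] == line:
            hits += 1            # served from the DRAM cache
        else:
            slots[slot] = line   # miss: fetched from Optane, then cached
    hit_rate = hits / len(lines)
    # Effective latency = h * t_DRAM + (1 - h) * t_Optane
    avg_ns = hit_rate * DRAM_NS + (1 - hit_rate) * OPTANE_NS
    return hit_rate, avg_ns

rng = random.Random(42)
CACHE_LINES = 1_000      # DRAM cache size, in cache lines
ADDRESS_SPACE = 8_000    # Optane capacity: 8x the DRAM cache
N = 100_000

# Predictable workload: keeps touching a hot set smaller than the cache.
hot = [rng.randrange(500) for _ in range(N)]
# Highly random workload spread over the whole address range.
wide = [rng.randrange(ADDRESS_SPACE) for _ in range(N)]

for name, trace in [("hot set", hot), ("wide random", wide)]:
    h, ns = simulate(trace, CACHE_LINES)
    print(f"{name:12s} hit rate {h:.2f}, average latency {ns:.0f} ns")
```

In this model the hot-set trace comes out close to a 100% hit rate, while the wide trace lands near 1 in 8 because each DRAM slot is shared by eight Optane lines, pulling its average latency toward the Optane figure.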

Memory Mode brings large memory capacity at affordable cost points to legacy applications. Virtualized database deployments and big-data analytics applications are candidates for Memory Mode.

In App Direct Mode, the applications and operating system are explicitly aware that the platform has two types of direct load/store memory, and they can direct each read or write to whichever type suits it. Operations that need the lowest latency and no permanent storage, such as database "scratch pads," can be executed in DRAM. Data that must be made persistent, or structures that are very large, can be routed to the Optane persistent memory. So if you want to make data persistent in memory, you must use App Direct Mode.
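From an application's point of view, App Direct typically means memory-mapping a file that lives on a persistent-memory-aware file system and then using ordinary loads and stores on it. The Python sketch below illustrates that pattern under stated assumptions: the mount point `/mnt/pmem0` is hypothetical, the record layout is invented for the example, and `mmap.flush()` (an `msync` call on Unix) stands in for the cache-flush routines a dedicated library such as PMDK's libpmem would use on a real DAX mapping. Short-lived working data stays in an ordinary Python list in DRAM; only the result worth keeping is written to the mapped region.

```python
import mmap
import os
import tempfile

REGION_SIZE = 4096  # bytes reserved in the persistent file (assumed size)

def open_region(path, size=REGION_SIZE):
    """Create or open a fixed-size file and map it into the address space."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        if os.fstat(fd).st_size < size:
            os.ftruncate(fd, size)
        return mmap.mmap(fd, size)  # shared, read-write mapping by default
    finally:
        os.close(fd)  # mmap keeps its own handle, so the mapping stays valid

def persist_record(region, payload):
    """Write a length-prefixed record at offset 0, then flush it."""
    if len(payload) > len(region) - 4:
        raise ValueError("payload does not fit in the region")
    region[0:4] = len(payload).to_bytes(4, "little")
    region[4:4 + len(payload)] = payload
    region.flush()  # msync() on Unix; libpmem would flush CPU caches instead

def load_record(region):
    """Read back the length-prefixed record stored at offset 0."""
    n = int.from_bytes(region[0:4], "little")
    return bytes(region[4:4 + n])

if __name__ == "__main__":
    # Hypothetical DAX mount point; falls back to a temp dir when absent.
    base = "/mnt/pmem0" if os.path.isdir("/mnt/pmem0") else tempfile.gettempdir()
    path = os.path.join(base, "appdirect-demo.bin")

    scratch = [x * x for x in range(1000)]  # short-lived working data in DRAM
    region = open_region(path)
    persist_record(region, f"sum of squares: {sum(scratch)}".encode())
    region.close()

    # Simulated restart: reopen the mapping and read the record directly.
    region = open_region(path)
    print(load_record(region).decode())
    region.close()
```

On an ordinary disk-backed file the same code works, but the flush goes through the page cache to storage; only on a DAX-mounted persistent memory file system do the stores land directly in the Optane media.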

In-memory databases, in-memory analytics frameworks and ultrafast storage applications are good examples of workloads that greatly benefit from using App Direct Mode.

App Direct mode requires an operating system or virtualization environment with a persistent memory-aware file system, including Microsoft Windows Server 2019 and a future update release of VMware ESXi 6.7. Intel is also engaged with the Linux community, and Linux distributors plan to support Optane persistent memory as well.
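On Linux, ext4 and XFS provide persistent-memory awareness through direct access (DAX), which is requested as a mount option. A minimal sketch for spotting such mounts, assuming the option shows up in `/proc/mounts` as `dax` or `dax=always` (the exact spelling varies by kernel version; `dax=inode`, which enables DAX per file, is deliberately not matched here). The function takes the file's text as input so it can be tried on sample data.

```python
def dax_mounts(mounts_text):
    """Return mount points whose options request DAX for all files."""
    result = []
    for line in mounts_text.splitlines():
        fields = line.split()  # device, mount point, fstype, options, dump, pass
        if len(fields) < 4:
            continue
        mount_point, options = fields[1], fields[3].split(",")
        if "dax" in options or "dax=always" in options:
            result.append(mount_point)
    return result

if __name__ == "__main__":
    try:
        with open("/proc/mounts") as f:
            print(dax_mounts(f.read()))
    except FileNotFoundError:
        print("no /proc/mounts on this system")
```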

The mode also determines how much memory capacity the OS registers as present in the platform. In App Direct Mode, both the DRAM and the Optane persistent memory count toward total platform memory. In Memory Mode, the DRAM is used as a cache and does not appear as an independent memory resource, so it is not included in the total memory the OS sees. For example, a platform with 1.536 TB of Optane persistent memory and 192 GB of DRAM would register with the OS as 1.728 TB of total memory in App Direct Mode, but only 1.536 TB in Memory Mode.
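The capacity rule reduces to a one-line difference between the modes. A minimal sketch that reproduces the example figures in GB; the mode names `"app_direct"` and `"memory"` are illustrative labels, not identifiers from any Intel tool, and the function encodes only the rule as the article states it.

```python
def os_visible_gb(dram_gb, optane_gb, mode):
    """Memory capacity the OS registers, following the rule stated above."""
    if mode == "app_direct":
        return dram_gb + optane_gb  # both tiers are counted
    if mode == "memory":
        return optane_gb            # DRAM is hidden, acting as a cache
    raise ValueError(f"unknown mode: {mode!r}")

DRAM_GB, OPTANE_GB = 192, 1536
print(os_visible_gb(DRAM_GB, OPTANE_GB, "app_direct"))  # 1728
print(os_visible_gb(DRAM_GB, OPTANE_GB, "memory"))      # 1536
```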

Erin Chapple, Corporate VP of Windows Server at Microsoft, today shared the advancements Microsoft has brought to Windows Server 2019 and SQL Server through a joint collaboration with Intel. These include a new performance record for Microsoft Hyper-V and Storage Spaces Direct of 13.7 million IOPS, demonstrated at Microsoft Ignite, more than double the record set just last year.

VMware CTO of Server Platform Technologies Rich Brunner joined Intel's Lisa Spelman to discuss VMware's optimization of vSphere and to demonstrate VM capacity scaling to 122 VMs on a two-socket platform with 6 TB of system memory delivered by Intel Optane DC persistent memory.