IBM Claims Record Deep Learning Performance

IBM Research has reported an algorithmic breakthrough for deep learning that comes close to achieving the holy grail of ideal scaling efficiency.

IBM's new distributed deep-learning (DDL) software enables a nearly linear speedup with each added processor. The goal is to achieve a similar speedup with each server added to a cluster running IBM's DDL algorithm.

The company published a paper on arXiv describing the new distributed deep learning software, which achieved record-low communication overhead and 95% scaling efficiency on the Caffe deep learning framework across 256 NVIDIA GPUs in 64 IBM Power systems.

The previous best scaling efficiency, 89%, was demonstrated by Facebook AI Research for a training run on Caffe2, at higher communication overhead. IBM Research also beat Facebook's time, training the model in 50 minutes versus the 1 hour Facebook took. Using this software, IBM Research achieved a record image recognition accuracy of 33.8% for a neural network trained on a very large data set (7.5M images). The previous record, published by Microsoft, was 29.8%.
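To put the efficiency figures in perspective, scaling efficiency can be read as the fraction of ideal linear speedup that a cluster actually delivers. The short sketch below turns the two reported percentages into effective speedups over a single GPU. The function name is illustrative, and evaluating the 89% figure at the same 256-GPU count is an assumption made only for comparison.

```python
def speedup(efficiency: float, num_gpus: int) -> float:
    """Effective speedup over one GPU: efficiency times the number of GPUs."""
    return efficiency * num_gpus


NUM_GPUS = 256  # GPU count reported for the IBM run

ibm_speedup = speedup(0.95, NUM_GPUS)
# Assumption: the 89% figure is evaluated at the same GPU count for comparison.
prior_speedup = speedup(0.89, NUM_GPUS)

print(f"95% efficiency: about {ibm_speedup:.1f}x one GPU")
print(f"89% efficiency: about {prior_speedup:.1f}x one GPU")
print(f"Difference: about {ibm_speedup - prior_speedup:.1f} effective GPUs")
```

Under these assumptions, six points of efficiency at this scale is worth roughly the throughput of 15 additional GPUs.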

A technical preview of this IBM Research Distributed Deep Learning code is available today in the IBM PowerAI 4.0 distribution for TensorFlow and Caffe.

Deep learning is a widely used AI method that helps computers understand and extract meaning from the images and sounds through which humans experience much of the world. It holds promise to fuel breakthroughs in everything from consumer mobile app experiences to medical imaging diagnostics. But progress in accuracy and in the practicality of deploying deep learning at scale is gated by technical challenges, such as the need to run massive and complex deep learning based AI models, a process for which training times are measured in days and weeks.

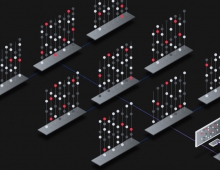

IBM Research has been focused on reducing these training times for large models with large data sets. The objective is to reduce the wait time associated with deep learning training from days or hours to minutes or seconds, and to enable improved accuracy of these AI models. To achieve this, IBM's researchers are tackling grand-challenge scale issues in distributing deep learning across large numbers of servers and NVIDIA GPUs.

Most popular deep learning frameworks scale to multiple GPUs in a server, but not to multiple servers with GPUs. To address this, IBM's team (Minsik Cho, Uli Finkler, Sameer Kumar, David Kung, Vaibhav Saxena, Dheeraj Sreedhar) wrote software and algorithms that automate and optimize the parallelization of this very large and complex computing task across hundreds of GPU accelerators attached to dozens of servers.

The software performs deep learning training fully synchronously with very low communication overhead. As a result, when the researchers scaled to a large cluster with hundreds of NVIDIA GPUs, it yielded a record image recognition accuracy of 33.8% on 7.5M images from the ImageNet-22k dataset, versus the previous best published result of 29.8% by Microsoft. An increase of 4 percentage points in accuracy is a big leap forward; typical improvements in the past have been less than 1%. IBM's distributed deep learning (DDL) approach enabled the researchers not just to improve accuracy, but also to train a ResNet-101 neural network model in just 7 hours by leveraging the power of tens of servers equipped with hundreds of NVIDIA GPUs; Microsoft took 10 days to train the same model. This achievement required the team to create the DDL code and algorithms to overcome issues inherent to scaling these otherwise powerful deep learning frameworks.
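For readers unfamiliar with the term, "fully synchronous" training generally means that every worker computes a gradient on its own slice of the data, the gradients are averaged across all workers, and every worker then applies the identical update. The toy sketch below illustrates that general pattern on a one-parameter model in plain Python. It is not IBM's DDL algorithm: the function names, the data, and the in-process stand-in for the all-reduce communication step are all assumptions made for illustration.

```python
def local_gradient(w, shard):
    """Mean-squared-error gradient for the model y = w * x on one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def allreduce_mean(values):
    """Stand-in for an all-reduce: every worker receives the mean of all values."""
    mean = sum(values) / len(values)
    return [mean] * len(values)


def synchronous_step(weights, shards, lr):
    """One synchronous step: local gradients, global average, identical update."""
    grads = [local_gradient(w, s) for w, s in zip(weights, shards)]
    averaged = allreduce_mean(grads)
    return [w - lr * g for w, g in zip(weights, averaged)]


# Toy data generated from y = 3x, split evenly across 4 simulated workers.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
shards = [data[i::4] for i in range(4)]
weights = [0.0] * 4  # each worker holds its own copy of the parameter

for _ in range(200):
    weights = synchronous_step(weights, shards, lr=0.01)

print(weights)
```

Because every worker applies the same averaged gradient, the copies of the parameter never drift apart. The cost is that each step waits on the communication round, which is why low communication overhead matters so much at the scale of hundreds of GPUs.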

The company is making its DDL suite available free to any PowerAI platform user.