Researchers Use Analog AI Hardware to Support Deep Learning Inference Without Great Accuracy Loss

IBM's research team in Zurich has started developing a groundbreaking technique that achieves both energy efficiency and high accuracy in deep neural network computations using phase-change memory devices.

They believe this could be a way forward in advancing AI hardware-accelerator architectures.

Deep neural networks (DNNs) are revolutionizing the field of artificial intelligence as they continue to achieve unprecedented success in cognitive tasks such as image and speech recognition. However, running DNNs on current von Neumann computing architectures limits the achievable performance and energy efficiency. Because neither power efficiency nor performance should be compromised, a new hardware architecture is needed to optimize deep neural network inference.

For obvious reasons, Internet giants with server farms would ideally prefer to keep running such deep learning algorithms on the existing von Neumann infrastructure. At the end of the day, what’s adding on a few more servers to get the job done? This may work for a while, but server farms consume an enormous amount of energy. As deep learning continues to evolve and demand greater processing power, companies with large data centers will quickly realize that building more power plants to support an additional one million times the operations needed to run categorizations of a single image, for example, is neither economical nor sustainable.

Many companies are currently turning to the cloud as a solution. Indeed, cloud computing has favorable capabilities, including faster processing, which helps improve the performance of deep learning algorithms. But cloud computing has its shortcomings too. There are data privacy issues, potential response delays associated with the transmission of the data to the cloud and back, continual service costs, and, in some areas of the world, slow internet connectivity.

And the problem goes well beyond data centers. Think drones, robots, mobile devices and the like. Or consumer products, such as smart cameras, augmented reality goggles and other devices. Clearly, we need to take the efficiency route going forward by optimizing microchips and hardware to get such devices running on fewer watts.

While there has been significant progress in the development of hardware-accelerator architectures for inference, many of the existing set-ups physically split the memory and processing units. This means that DNN models are typically stored in off-chip memory, and that computational tasks require a constant shuffling of data between the memory and computing units – a process that slows down computation and limits the maximum achievable energy efficiency.

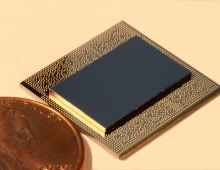

IBM's research, featured in Nature Communications, exploits in-memory computing methods using resistance-based (memristive) storage devices as a promising non-von Neumann approach for developing hardware that can efficiently support DNN inference models. Specifically, the researchers propose an architecture based on phase-change memory (PCM) that, like the human brain, has no separate compartments to store and compute data, and therefore consumes significantly less energy.

The challenge in using PCM devices, however, is achieving and maintaining computational accuracy. As PCM technology is analog in nature, computational precision is limited due to device variability as well as read and write conductance noise. To overcome this, the researchers needed to find a way to train the neural networks so that transferring the digitally trained weights to the analog resistive memory devices would not result in significant loss of accuracy.
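To make the accuracy problem concrete, the sketch below mimics what happens when digitally trained weights are written to imperfect analog devices. It is an illustration only: the function names (`program_to_analog`, `matvec`), the Gaussian noise model, and the 5% noise level are assumptions made for this example, not the device model used in the research.

```python
import random

def program_to_analog(weights, rel_noise=0.05, seed=0):
    """Return a noisy copy of `weights`, mimicking an imperfect analog write.

    Assumption: each device error is Gaussian with standard deviation
    rel_noise * max(|w|). Real PCM noise is more complex than this.
    """
    rng = random.Random(seed)
    w_max = max(abs(w) for row in weights for w in row)
    sigma = rel_noise * w_max
    return [[w + rng.gauss(0.0, sigma) for w in row] for row in weights]

def matvec(weights, x):
    """Plain matrix-vector product, the core operation of a DNN layer."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

# Made-up weights and input for a tiny 2x3 layer.
weights = [[0.5, -0.2, 0.1], [0.0, 0.3, -0.4]]
x = [1.0, 2.0, 3.0]

ideal = matvec(weights, x)
analog = matvec(program_to_analog(weights), x)
errors = [abs(a - b) for a, b in zip(ideal, analog)]

print("ideal output: ", ideal)
print("analog output:", analog)
print("abs error:    ", errors)
```

Every layer output picks up a small error, and in a deep network these errors compound from layer to layer, which is why a network trained without regard to the hardware can lose accuracy once its weights are transferred.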

Their approach was to explore injecting noise into the synaptic weights during the training of DNNs in software as a generic method to improve the network resilience against analog in-memory computing hardware non-idealities. Their assumption was that injecting noise comparable to the device noise during the training of DNNs would improve the robustness of the models.
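The idea of training with injected weight noise can be sketched on a toy problem. The example below is a minimal illustration, not the researchers' training code: it trains a two-input logistic classifier, perturbs the weights with Gaussian noise on every forward pass, and applies the resulting gradient to the clean weights. The function names, the noise model, the dataset, and all hyperparameters are assumptions chosen for the example.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_with_weight_noise(data, labels, rel_noise=0.1, epochs=200, lr=0.5, seed=0):
    """Logistic regression whose forward pass sees noisy weights."""
    rng = random.Random(seed)
    w = [0.0] * len(data[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(data, labels):
            # Assumed noise model: Gaussian, scaled by the largest current weight.
            sigma = rel_noise * max(max(abs(wi) for wi in w), 1e-8)
            w_noisy = [wi + rng.gauss(0.0, sigma) for wi in w]
            p = sigmoid(sum(wi * xi for wi, xi in zip(w_noisy, x)) + b)
            # The gradient is computed with the noisy weights
            # but applied to the clean weights.
            err = p - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b

def predict(w, b, x):
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

# Made-up, linearly separable toy data.
data = [[-1.0, -1.0], [-1.0, -0.5], [1.0, 1.0], [1.0, 0.5]]
labels = [0, 0, 1, 1]

w, b = train_with_weight_noise(data, labels)
print("clean weights:", w, "bias:", b)
print("predictions:  ", [predict(w, b, x) for x in data])
```

Because the model only ever sees perturbed weights during training, it is pushed towards solutions whose decisions do not hinge on the exact value of any single weight, which is the property needed when the weights later live on noisy devices.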

It turned out that the assumption was correct: training ResNet-type networks this way resulted in no considerable accuracy loss when transferring weights to PCM devices. The researchers achieved an accuracy of 93.7% on the CIFAR-10 dataset and a top-1 accuracy on the ImageNet benchmark of 71.6% after mapping the trained weights to analog PCM synapses. And after programming the trained weights of ResNet-32 on 723,444 PCM devices of a prototype chip, the accuracy computed from the measured hardware weights stayed above 92.6% over a period of 1 day.

To further improve accuracy, the researchers developed an online compensation technique that exploits the batch normalization parameters to periodically correct the activation distributions during inference. This allowed them to improve the one-day CIFAR-10 accuracy retention to 93.5% on hardware.
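One plausible way to picture such a correction is to re-estimate the normalization statistics from a small calibration batch once the hardware weights have changed. The sketch below is an assumption-laden illustration, not the researchers' procedure: it models the hardware change as a uniform 30% shrink of one channel's pre-activations, and the helper names and numbers are made up.

```python
import statistics

def batch_norm(values, mean, var, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one channel's pre-activations with the given statistics."""
    return [gamma * (v - mean) / (var + eps) ** 0.5 + beta for v in values]

def recalibrate(values):
    """Re-estimate mean and variance from a calibration batch."""
    return statistics.mean(values), statistics.pvariance(values)

# Pre-activations of one channel right after programming (made-up numbers).
fresh = [0.8, 1.2, 1.0, 1.6, 0.4, 1.0]
mean0, var0 = recalibrate(fresh)
reference = batch_norm(fresh, mean0, var0)

# Assumption: over time, every pre-activation shrinks by the same factor.
drifted = [0.7 * v for v in fresh]

# With stale statistics the normalized activations are shifted and squeezed.
stale = batch_norm(drifted, mean0, var0)

# With recalibrated statistics the original distribution is recovered.
mean1, var1 = recalibrate(drifted)
corrected = batch_norm(drifted, mean1, var1)

print("reference:", [round(v, 3) for v in reference])
print("stale:    ", [round(v, 3) for v in stale])
print("corrected:", [round(v, 3) for v in corrected])
```

In this toy setting the correction only touches the normalization statistics, so the weights stored on the devices do not need to be reprogrammed.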

In parallel, the team also experimented with training DNN models using analog PCM synapses. Although training is a much more difficult problem to tackle than inference, using an innovative mixed-precision architecture, the researchers were able to achieve software-equivalent accuracies on several types of small-scale DNNs, including multilayer perceptrons, convolutional neural networks, long short-term memory networks, and generative adversarial networks. This research was recently published in the peer-reviewed journal Frontiers in Neuroscience.

In an era that is moving more and more towards AI-based technologies, including battery-powered internet-of-things devices and autonomous vehicles, such technologies would benefit greatly from fast, low-power, and reliably accurate DNN inference engines.