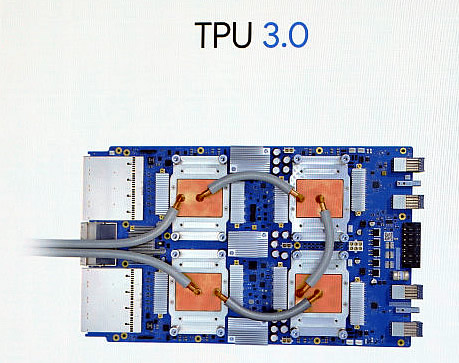

Google's Third-Generation Tensor Processing Unit Offers 100 Petaflops of Performance

Google announced at Google I/O 2018 that it is rolling out its third generation of custom silicon, the Tensor Processing Unit (TPU) 3.0.

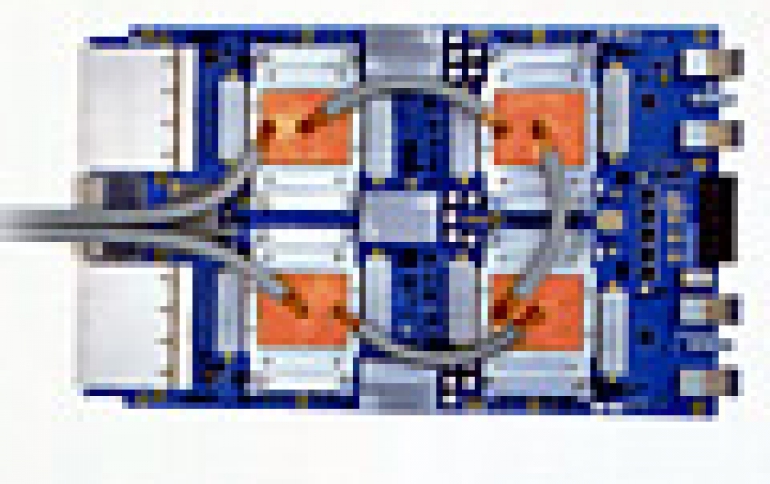

Google CEO Sundar Pichai said a pod of the new TPUs is eight times more powerful than last year's, delivering up to 100 petaflops of performance. The chip is optimized for TensorFlow, Google's framework for developing machine learning tools. Google said the new accelerators will be packaged into machine learning supercomputers called TPU Pods, each containing 64 third-generation chips.

The added power also raises cooling requirements: Google said this is the first time it has had to use liquid cooling in its data centers. Heat dissipation is an increasingly difficult problem for companies building custom hardware for machine learning.

Amazon and Facebook are also working on their own custom silicon. Facebook's hardware is optimized for its Caffe2 framework, while Amazon wants to own the cloud infrastructure ecosystem with AWS.

Intel has also been beating the drum for FPGAs (field-programmable gate arrays), which are designed to be more modular and flexible as the needs of machine learning change over time.

Microsoft is also betting on FPGAs: yesterday, at its BUILD conference, it unveiled what it calls Brainwave, an FPGA-based offering for its Azure cloud platform.