Facebook Puts Warning Labels on Millions of Misleading Covid-19 Posts

Facebook added warnings to about 40 million pieces of misinformation about the coronavirus on its main social network in March, part of an effort to stem the spread of bad advice and misleading articles.

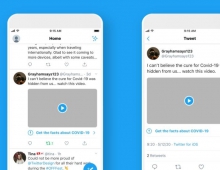

Once a piece of content is rated false by Facebook's fact-checkers, the company reduces its distribution and shows warning labels with more context. A single fact-check lets Facebook run similarity-detection methods that identify duplicates of debunked stories. During March, Facebook says it displayed warnings on about 40 million posts related to COVID-19 on its main platform, based on around 4,000 articles by the company's independent fact-checking partners. When people saw those warning labels, 95% of the time they did not go on to view the original content. To date, Facebook has also removed hundreds of thousands of pieces of misinformation that could lead to imminent physical harm. Examples of removed misinformation include harmful claims, such as that drinking bleach cures the virus, and theories such as that physical distancing is ineffective in preventing the disease from spreading.

In the next few weeks, users who liked, commented on or reacted to misleading posts that were later taken down will be shown messages in their news feeds linking to factual information about COVID-19, the company said.

These messages will connect people to COVID-19 myths debunked by the WHO, including ones Facebook has removed from its platform because they could lead to imminent physical harm.

To make it easier for people to find accurate information about COVID-19, Facebook recently added a new section to its COVID-19 Information Center called Get the Facts. It includes fact-checked articles from Facebook's partners that debunk misinformation about the coronavirus. The fact-check articles are selected by Facebook's News curation team and updated every week. The section is now available in the US, and Facebook will soon add it to Facebook News in the US as well.