Facebook to Open-source Artificial Intelligence Server Design

Facebook is releasing the hardware design for a server it uses to train artificial intelligence (A.I.) software, allowing other companies exploring A.I. to build similar systems. The 'Big Sur' server is a GPU-based system for training neural networks. It can be used to run machine learning programs, a type of A.I. software that "learns" and gets better at tasks over time.

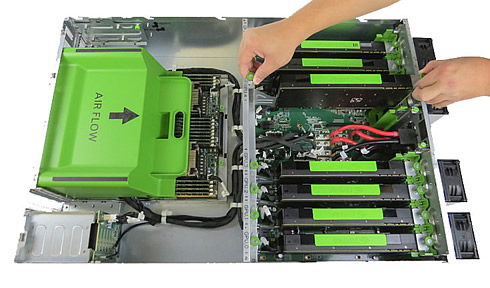

Big Sur is Open Rack-compatible hardware designed for A.I. computing at large scale. The system incorporates eight high-performance GPUs of up to 300 watts each, with the flexibility to configure between multiple PCI-e topologies. Leveraging NVIDIA's Tesla Accelerated Computing Platform, Big Sur is twice as fast as Facebook's previous-generation server. Distributing training across eight GPUs allows Facebook to scale the size and speed of its networks by another factor of two.
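As a rough back-of-the-envelope illustration of those figures, the short Python sketch below works out the peak GPU power budget and a combined scaling factor. The function names are hypothetical, and treating the two factors of two as multiplicative is an assumption made here for illustration, not something Facebook has stated.

```python
# Figures quoted in the article.
GPU_COUNT = 8
MAX_WATTS_PER_GPU = 300
GENERATION_SPEEDUP = 2.0   # "twice as fast" as the previous-generation server
MULTI_GPU_SCALING = 2.0    # "another factor of two" from eight-GPU training


def peak_gpu_power_watts(gpu_count: int, watts_per_gpu: int) -> int:
    """Upper bound on GPU power draw only; CPUs, fans, and storage are ignored."""
    return gpu_count * watts_per_gpu


def combined_scaling(*factors: float) -> float:
    """Multiply scaling factors together (assumes they are independent)."""
    result = 1.0
    for factor in factors:
        result *= factor
    return result


if __name__ == "__main__":
    print(peak_gpu_power_watts(GPU_COUNT, MAX_WATTS_PER_GPU), "W peak for GPUs")
    print(combined_scaling(GENERATION_SPEEDUP, MULTI_GPU_SCALING), "x combined (assumed)")
```

The power figure is an upper bound for the GPUs alone, which gives a sense of why thermal design matters for a chassis like this.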

In addition to the improved performance, Facebook says that Big Sur is more versatile and efficient than the off-the-shelf solutions in the company's previous generation. While many high-performance computing systems require special cooling and other unique infrastructure to operate, these new servers have been optimized for thermal and power efficiency, allowing Facebook to operate them even in its own free-air-cooled, Open Compute standard data centers. Big Sur was built with the NVIDIA Tesla M40 in mind but is qualified to support a wide range of PCI-e cards.

Facebook also removed components that are rarely used. Components that fail relatively frequently, such as hard drives and DIMMs, can now be removed and replaced in a few seconds. Touch points for technicians are all Pantone 375 C green, the same touch-point color used on all of Facebook’s custom data center hardware, which helps technicians identify, access and remove parts.

Facebook plans to open-source Big Sur and will submit the design materials to the Open Compute Project (OCP). Facebook didn't say when it would release the specifications for Big Sur.

Google is also rolling out machine learning across more of its services.