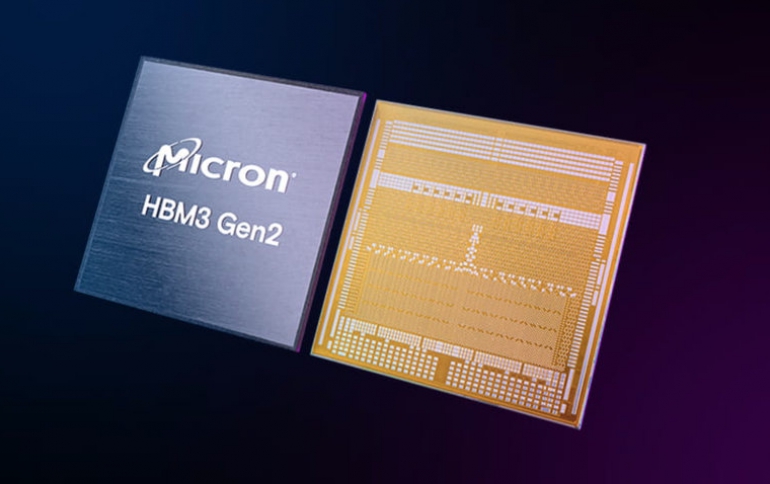

Crucial announces HBM3 Gen2 memory

Crucial today revealed HBM3 Gen2 memory, which the company describes as the industry’s fastest, highest-capacity HBM, designed to advance generative AI innovation.

Generative AI

Generative AI opens a world of new forms of creativity and expression, like the image above, by using large language models (LLMs) for training and inference. How well compute and memory resources are utilized makes the difference in time to deploy and response time. Micron HBM3 Gen2 provides higher memory capacity that improves performance and reduces CPU offload, for faster training and more responsive queries when running inference on LLMs such as ChatGPT™.

Deep learning

AI unlocks new possibilities for businesses, IT, engineering, science, medicine and more. As larger AI models are deployed to accelerate deep learning, maintaining compute and memory efficiency is important for managing performance, cost and power so that the benefits reach everyone. Micron HBM3 Gen2 improves memory performance while focusing on energy efficiency, which increases performance per watt and shortens the time to train LLMs such as GPT-4 and beyond.

High-performance computing (HPC)

Scientists, researchers, and engineers are challenged to find solutions in climate modeling, cancer treatment, and renewable and sustainable energy resources. High-performance computing (HPC) shortens time to discovery by executing very complex algorithms and advanced simulations that use large datasets. Micron HBM3 Gen2 provides higher memory capacity and improves performance by reducing the need to distribute data across multiple nodes, accelerating the pace of innovation.

Micron's HBM3 Gen2

HBM3 Gen2 built for AI and supercomputing with industry-leading process technology

Micron extends industry-leading performance across its data center product portfolio with HBM3 Gen2, delivering faster data rates, improved thermal response, and 50% higher monolithic die density within the same package footprint as the previous generation.

greater than 1.2TB/s memory bandwidth

HBM3 Gen2 provides the memory bandwidth to fuel AI compute cores

With advanced CMOS innovations and industry-leading 1β process technology, Micron HBM3 Gen2 provides memory bandwidth that exceeds 1.2TB/s.
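To put the 1.2TB/s figure in perspective, the sketch below converts a per-stack bandwidth into the per-pin data rate it implies. It assumes a 1024-bit-wide interface per stack, which is the usual HBM3 width but is not stated in the text above, and it treats 1TB as 10^12 bytes. The function name `per_pin_gbps` is illustrative only.

```python
def per_pin_gbps(bandwidth_tb_per_s: float, bus_width_bits: int = 1024) -> float:
    """Per-pin data rate in Gb/s implied by a stack bandwidth given in TB/s.

    Assumption: bus_width_bits is the number of data pins per stack
    (1024 is assumed here; the article does not state it).
    """
    bits_per_second = bandwidth_tb_per_s * 1e12 * 8  # decimal TB, bytes -> bits
    return bits_per_second / bus_width_bits / 1e9    # spread over pins, in Gb/s


if __name__ == "__main__":
    rate = per_pin_gbps(1.2)
    print(f"1.2 TB/s over 1024 pins is about {rate:.3f} Gb/s per pin")
```

Under these assumptions, 1.2TB/s works out to roughly 9.4Gb/s per pin; a narrower or wider interface would scale the result proportionally.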

50% more capacity

HBM3 Gen2 unlocks the world of generative AI

With 50% more memory capacity per 8-high 24GB cube, HBM3 Gen2 enables training at higher precision and accuracy.
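The capacity claim can be checked with simple arithmetic. The sketch below works backwards from the quoted numbers (24GB, 8-high, 50% more) to the implied previous-generation cube size and the per-die density. It assumes the 50% uplift is measured against an 8-high cube of the previous generation, and the helper name is hypothetical.

```python
def previous_capacity_gb(new_capacity_gb: float, uplift: float) -> float:
    """Capacity of the baseline cube, given the new capacity and fractional uplift."""
    return new_capacity_gb / (1.0 + uplift)


CUBE_GB = 24   # capacity of the 8-high cube, from the text
DIES = 8       # "8-high" stack
UPLIFT = 0.5   # "50% more capacity"

baseline_gb = previous_capacity_gb(CUBE_GB, UPLIFT)  # implied previous cube size
per_die_gb = CUBE_GB / DIES                          # gigabytes per die
per_die_gbit = per_die_gb * 8                        # gigabits per die

if __name__ == "__main__":
    print(f"Implied previous-generation cube: {baseline_gb:.0f} GB")
    print(f"Per-die density: {per_die_gb:.0f} GB ({per_die_gbit:.0f} Gb)")
```

With these inputs the baseline is a 16GB cube, and each die in the 24GB cube holds 3GB (24Gb), which is consistent with the 50% higher monolithic die density mentioned earlier.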

greater than 2.5x improvement in performance/watt

HBM3 Gen2 delivers increased performance per watt for AI and HPC workloads

Micron designed an energy-efficient data path that reduces thermal impedance and enables a greater than 2.5x improvement in performance per watt compared to the previous generation.

greater than 50% more queries/day

HBM3 Gen2 pioneers training of multimodal, multitrillion parameter AI models

With increased memory bandwidth that improves system-level performance, HBM3 Gen2 reduces training time by more than 30% and enables more than 50% more queries per day.
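The two percentages describe different things: one is a reduction in time, the other a gain in throughput. As a rough illustration, the sketch below converts between the two, assuming a fixed workload where throughput is simply the inverse of time per task. That is a simplification of real systems, and the function names are illustrative.

```python
def speedup_from_time_reduction(reduction: float) -> float:
    """Throughput multiplier implied by a fractional reduction in time per task."""
    return 1.0 / (1.0 - reduction)


def time_saving_from_throughput_gain(gain: float) -> float:
    """Fractional reduction in time per task implied by a fractional throughput gain."""
    return 1.0 - 1.0 / (1.0 + gain)


if __name__ == "__main__":
    # 30% less training time for the same job
    print(f"Training speedup: {speedup_from_time_reduction(0.30):.2f}x")
    # 50% more queries in the same day
    print(f"Time saved per query: {time_saving_from_throughput_gain(0.50):.1%}")
```

Under this model, a 30% cut in training time corresponds to about a 1.43x speedup, and 50% more queries per day corresponds to each query taking about a third less time.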