Intel Outlines 'Data-Centric' Strategy, Shows off Xeon Roadmap

Today at Intel's Data-Centric Innovation Summit, Navin Shenoy, executive vice president and general manager of the Data Center Group at Intel, shared the company's strategy for the future of data-centric computing, as well as a view of Intel's total addressable market (TAM) and new details about its server product roadmap.

Analysts forecast that by 2025 data will grow tenfold, reaching 163 zettabytes. But we have a long way to go in harnessing the power of this data.

The life-saving potential of autonomous driving is profound: many lives globally could be saved as a result of fewer accidents. Achieving this, however, requires a combination of technologies working in concert, including computer vision, edge computing, mapping, the cloud and artificial intelligence (AI).

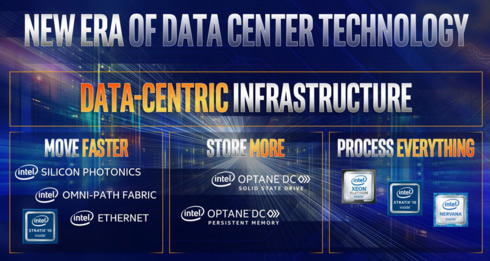

This, in turn, requires a significant shift in the way the industry views computing and data-centric technology. "We need to look at data holistically, including how we move data faster, store more of it and process everything from the cloud to the edge," Shenoy said.

This end-to-end approach is core to Intel's strategy - helping customers move, store and process data. In fact, Intel has revised its TAM from $160 billion in 2021 to $200 billion in 2022 for its data-centric businesses.

Connectivity and the network have become the bottlenecks that keep high-performance computing from being used to its full potential. Intel says that silicon photonics technologies are designed to break those bottlenecks by integrating the laser in silicon and, ultimately, delivering the lowest cost and power per bit and the highest bandwidth.

In addition, Intel's Alexis Bjorlin announced today that the company is further expanding its connectivity portfolio with a new SmartNIC product line - code-named Cascade Glacier - which is based on Intel Arria 10 FPGAs and enables optimized performance for Intel Xeon processor-based systems. Cascade Glacier is sampling to Intel's customers today and will be available in the first quarter of 2019.

Intel recently unveiled more details about Intel Optane DC persistent memory, a new class of memory and storage that enables a large persistent memory tier between DRAM and SSDs, while being fast and affordable. And today, Intel shared new performance metrics showing that, for certain analytics queries, Intel Optane DC persistent memory-based systems can achieve up to 8 times the performance of configurations that rely on DRAM only.

Intel says that Google, CERN, Huawei, SAP and Tencent already see this as a game-changer. And today, Intel started to ship the first units of Optane DC persistent memory to Google. Broad availability is planned for 2019, with the next generation of Intel Xeon processors.

In addition, at the Flash Memory Summit, Intel unveiled new Intel QLC 3D NAND-based products.

Intel's investments in optimizing Intel Xeon processors and Intel FPGAs for artificial intelligence are also paying off. In 2017, more than $1 billion in revenue came from Intel's customers running AI on Intel Xeon processors in the data center. And the company continues to improve AI training and inference performance. In total, since 2014, Intel says its performance has improved well over 200 times.

Intel also disclosed today the next-generation roadmap for the Intel Xeon platform:

- Cascade Lake is a future Intel Xeon Scalable processor based on 14nm technology that will introduce Intel Optane DC persistent memory and a set of new AI features called Intel DL Boost. This embedded AI accelerator will speed deep learning inference workloads, with an expected 11 times faster image recognition than the current generation Intel Xeon Scalable processors when they launched in July 2017. Cascade Lake is targeted to begin shipping late this year.

- Cooper Lake is a future Intel Xeon Scalable processor that is based on 14nm technology. Cooper Lake will introduce a new generation platform with significant performance improvements, new I/O features, new Intel DL Boost capabilities (Bfloat16) that improve AI/deep learning training performance, and additional Intel Optane DC persistent memory innovations. Cooper Lake is targeted for 2019 shipments.

- Ice Lake is a future Intel Xeon Scalable processor based on 10nm technology that shares a common platform with Cooper Lake and is planned as a fast follow-on targeted for 2020 shipments.
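
For readers unfamiliar with the Bfloat16 format mentioned for Cooper Lake, the sketch below illustrates the general idea: a bfloat16 value keeps the sign bit, the 8 exponent bits and the top 7 mantissa bits of a 32-bit float, so it covers the same range with less precision. This is a generic illustration of the number format, not Intel's implementation of DL Boost. The function names are hypothetical, and the conversion uses plain truncation as a simplifying assumption, where real hardware or libraries may round instead.

```python
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Return a 16-bit bfloat16 pattern for x (hypothetical helper).

    Assumption: the low 16 bits of the float32 encoding are simply
    dropped (truncation); real implementations may round to nearest.
    """
    # Encode x as an IEEE 754 float32 and read it back as a 32-bit integer.
    (bits32,) = struct.unpack(">I", struct.pack(">f", x))
    # Keep the upper 16 bits: sign, 8 exponent bits, top 7 mantissa bits.
    return bits32 >> 16


def bfloat16_bits_to_float(bits16: int) -> float:
    """Expand a 16-bit bfloat16 pattern back to a Python float."""
    # Pad the missing low mantissa bits with zeros and decode as float32.
    (value,) = struct.unpack(">f", struct.pack(">I", (bits16 & 0xFFFF) << 16))
    return value


def round_trip(x: float) -> float:
    """Show the precision lost by storing x as bfloat16."""
    return bfloat16_bits_to_float(float32_to_bfloat16_bits(x))


if __name__ == "__main__":
    for sample in (1.0, 3.14159, 1000.123):
        print(sample, "->", round_trip(sample))
```

Values such as 1.0 survive the round trip unchanged, while 3.14159 comes back as 3.140625, which shows the trade the format makes: a float32-sized exponent range in exchange for only a few significant digits.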