Google Lens Coming to Camera Apps, Maps Become More Personal

Google is using artificial intelligence to help you understand and interact with the physical world through Google Maps and Google Lens.

Maps can now tell you if the business you're looking for is open, how busy it is, and whether parking is easy to find before you arrive. Lens lets you just point your camera and get answers about everything from that building in front of you ... to the concert poster you passed ... to that lamp you liked in the store window.

Google Lens coming to smartphones

Last year, Google introduced Lens in Google Photos and the Assistant. People are already using it to answer questions - especially when they're difficult to describe in a search box, like "what type of dog is that?" or "what's that building called?"

Today at Google I/O, Google announced that Lens will now be available directly in the camera app on supported devices from LGE, Motorola, Xiaomi, Sony Mobile, HMD/Nokia, Transsion, TCL, OnePlus, BQ, Asus, and of course the Google Pixel. The company also announced three updates that enable Lens to answer more questions, about more things, more quickly:

- First, smart text selection connects the words you see with the answers and actions you need. You can copy and paste text from the real world (like recipes, gift card codes, or Wi-Fi passwords) to your phone. Lens helps you make sense of a page of words by showing you relevant information and photos. Say you're at a restaurant and see the name of a dish you don't recognize: Lens will show you a picture to give you a better idea. This requires not just recognizing shapes of letters, but also the meaning and context behind the words.

- Second, sometimes your question is not, "what is that exact thing?" but instead, "what are things like it?" Now, with style match, if an outfit or home decor item catches your eye, you can open Lens and not only get info on that specific item, like reviews, but also see things in a similar style that fit the look you like.

- Third, Lens now works in real time. It's able to proactively surface information instantly - and anchor it to the things you see. Now you'll be able to browse the world around you, just by pointing your camera. This is only possible with machine learning, using both on-device intelligence and cloud TPUs, to identify billions of words, phrases, places, and things in a split second.

Explore and eat your way around town with Google Maps

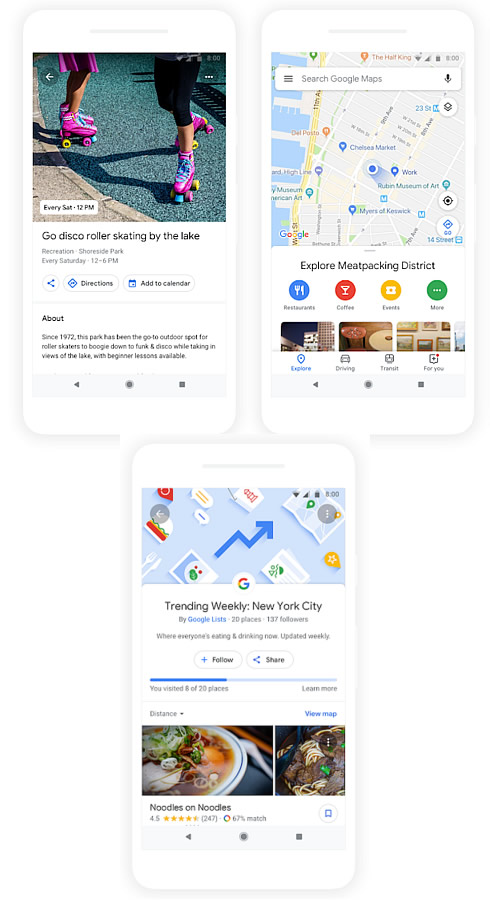

In the coming months, Google Maps will become more assistive and personal with new features that help you figure out what to eat, drink, and do, no matter what part of the world you're in.

The redesigned Explore tab will be your hub for everything new and interesting nearby. When you check out a particular area on the map, you'll see dining, event, and activity options based on the area you're looking at. Top trending lists like the Foodie List show you where the tastemakers are going, and help you find new restaurants based on information from local experts, Google's algorithms, and trusted publishers like The Infatuation and others.

Google will even help you track your progress against each list, so if you've crossed four of the top restaurants in the Meatpacking District off your list, you'll know that you have six more to try.

Tapping on any food or drink venue will display your "match," a number that suggests how likely you are to enjoy a place, along with reasons explaining why. Google uses machine learning to generate this number, based on a few factors: what Google knows about a business, the food and drink preferences you've selected in Google Maps, places you've been to, and whether you've rated a restaurant or added it to a list. Your matches change as your own tastes and preferences evolve over time.
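Google has not published how the match number is computed, so the following is only a toy illustration of how several signals could be blended into a single score. The signal names, the 0.0 to 1.0 normalization, the weights, and the 0 to 100 scale are all assumptions made for this sketch; a real system would learn the relationship from data rather than use fixed weights.

```python
from dataclasses import dataclass


@dataclass
class MatchSignals:
    """Hypothetical inputs, each assumed to be normalized to 0.0-1.0."""
    business_info: float       # what is known about the business
    preference_overlap: float  # overlap with the user's chosen food and drink preferences
    visit_similarity: float    # similarity to places the user has been to
    rating_signal: float       # derived from the user's ratings and saved lists


# Illustrative weights only (an assumption, not values from Google).
WEIGHTS = {
    "business_info": 0.3,
    "preference_overlap": 0.3,
    "visit_similarity": 0.2,
    "rating_signal": 0.2,
}


def match_score(signals: MatchSignals) -> int:
    """Blend the signals into a whole-number score between 0 and 100."""
    total = sum(weight * getattr(signals, name) for name, weight in WEIGHTS.items())
    clamped = max(0.0, min(1.0, total))  # guard against out-of-range inputs
    return round(clamped * 100)
```

Because the score depends on signals such as ratings and visits, it would shift as those inputs change, which mirrors the article's point that matches evolve with your tastes.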

When you need to corral a group for a meal or activity, there's a new feature that makes it easier to coordinate. Long press on the places you're interested in to add them to a shareable shortlist that your friends and family can add more places to and vote on. Once you've made a decision together, you can use Google Maps to book a reservation and find a ride.
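The shortlist flow described above (add places, let others add more, then vote) can be pictured with a small data structure. This is a hypothetical sketch, not how Google Maps implements it: the `Shortlist` class, the one-vote-per-person rule, and the tie-break by insertion order are all assumptions chosen for illustration.

```python
from collections import Counter


class Shortlist:
    """A hypothetical shared shortlist with one vote per person."""

    def __init__(self):
        self.places = []  # places in the order they were added
        self.votes = {}   # voter name -> place they currently back

    def add_place(self, place):
        # Anyone can add a place; duplicates are ignored.
        if place not in self.places:
            self.places.append(place)

    def vote(self, voter, place):
        # A later vote from the same person replaces the earlier one.
        if place not in self.places:
            raise ValueError(f"{place!r} is not on the shortlist")
        self.votes[voter] = place

    def winner(self):
        # Returns None until someone votes; ties go to the place added first.
        if not self.votes:
            return None
        tally = Counter(self.votes.values())
        return max(self.places, key=lambda place: tally[place])
```

For example, if two friends vote for the second place added and one votes for the first, `winner()` returns the second place.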

The new "For you" tab allows you to stay on top of the latest and greatest happening in the areas you're into. You can choose to follow neighborhoods and dining spots you want to try so you'll always have an idea for your next outing. Information about that new sandwich spot downtown, the surprise pop-up from your favorite chef, or that new bakery shaking up the pastry scene in Paris will now come straight to you.

You'll start to see these features rolling out globally on Android and iOS in the coming months.

Finally, Google also teased a potential future feature that taps into your phone's camera to drastically change how walking directions work. Blending "computer vision" with machine learning from the cloud transforms Maps into a Street View-like augmented reality experience. Holding up your camera still shows a tiny sliver of the map, but it also shows the world around you, overlaid with visual directions to your destination and pop-up cards displaying info about any businesses you pass. You might even be able to add a virtual guide to your destination.

The company didn't announce any plans for the AR revamp's rollout.