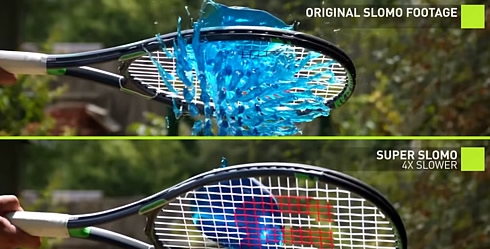

Nvidia Uses AI to Produce High-quality, 240fps Slow-motion Video From 30fps Source

NVIDIA has managed to create high-quality slow-motion footage using deep learning, with ordinary 30 frames-per-second video as the source.

Slow-motion video normally requires capturing a large number of frames per second. If there are not enough frames, the resulting slow-motion video looks choppy.

NVIDIA used artificial intelligence to fill the gap and effectively 'imagine' the extra frames. The system, developed with researchers from the University of Massachusetts Amherst and the University of California, Merced, looks at two consecutive frames and creates intermediate footage by tracking the movement of objects from one frame to the next.
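To make the idea of motion-based interpolation concrete, here is a deliberately tiny sketch. It is not NVIDIA's neural network: it assumes a one-dimensional "frame" containing a single bright pixel and assumes the object moves in a straight line between the two frames. The function names `locate` and `interpolate_frame` are hypothetical and exist only for this illustration.

```python
def locate(frame):
    """Return the index of the brightest pixel (the toy 'object')."""
    return max(range(len(frame)), key=frame.__getitem__)


def interpolate_frame(frame_a, frame_b, t):
    """Render a frame at time t (0 < t < 1) between frame_a and frame_b.

    Assumption: the object moves at constant speed along a straight path,
    so its position at time t is a linear mix of its two known positions.
    """
    pos_a = locate(frame_a)
    pos_b = locate(frame_b)
    pos_t = round(pos_a + t * (pos_b - pos_a))
    out = [0] * len(frame_a)
    out[pos_t] = frame_a[pos_a]  # carry the object's brightness along
    return out


frame_a = [0, 255, 0, 0, 0, 0, 0, 0, 0, 0]  # object at index 1
frame_b = [0, 0, 0, 0, 0, 0, 0, 0, 0, 255]  # object at index 9

# Halfway between the two frames, the object sits halfway along its path.
middle = interpolate_frame(frame_a, frame_b, 0.5)
print(middle)
```

Simply averaging the two frames would instead produce two faint ghost objects at indices 1 and 9. Moving the object along its path is what keeps the in-between frame looking like a real moment in time.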

The system runs on multiple Tesla V100 GPUs and uses the cuDNN deep learning library with the PyTorch deep learning framework. It was 'trained' on footage of sports activities shot at 240fps. It can then predict up to seven intermediate frames between each pair of source frames, turning a 30fps video into 240fps slow motion.
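The figure of seven frames follows from simple arithmetic: 240fps is eight times 30fps, so each gap between two source frames needs seven new frames. A minimal sketch of that calculation is shown here. The helper names are hypothetical, and it assumes the target rate is a whole multiple of the source rate.

```python
def intermediate_frame_count(source_fps, target_fps):
    """Number of new frames needed between each pair of source frames."""
    if target_fps % source_fps != 0:
        raise ValueError("target_fps must be a whole multiple of source_fps")
    return target_fps // source_fps - 1


def intermediate_times(source_fps, target_fps):
    """Evenly spaced positions in (0, 1) between two source frames."""
    n = intermediate_frame_count(source_fps, target_fps)
    return [i / (n + 1) for i in range(1, n + 1)]


count = intermediate_frame_count(30, 240)
times = intermediate_times(30, 240)

print(count)  # 7
print(times)  # [0.125, 0.25, 0.375, 0.5, 0.625, 0.75, 0.875]
```

Played back at the original 30fps, the resulting 240fps footage runs eight times slower than real time.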

Nvidia's technique looks faster and easier than existing methods for faking slow-motion footage.