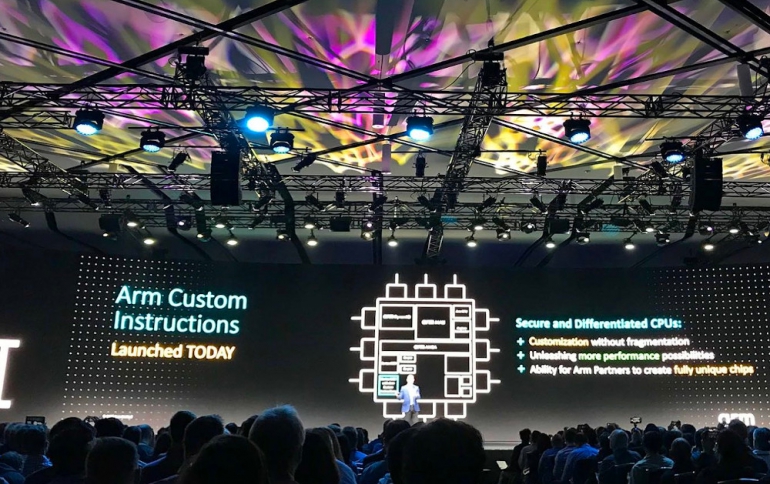

Arm Enables Custom Instructions for Embedded CPUs

Today at Arm TechCon 2019, Arm CEO Simon Segars announced Arm Custom Instructions, a new feature for the Armv8-M architecture. The company is joining with automakers General Motors Co and Toyota Motor Corp to establish common computing systems for self-driving cars.

Arm Custom Instructions

Arm Custom Instructions will initially be implemented in Arm Cortex-M33 CPUs starting in the first half of 2020 at no additional cost to new and existing licensees, enabling SoC designers to add their own instructions for specific embedded and IoT applications without risk of software fragmentation.

The Arm Cortex-M33 CPU is an Armv8-M core with DSP extensions, Arm TrustZone technology for security, and a Memory Protection Unit (MPU) for task isolation.

“A world of a trillion secure intelligent devices will be built on a diversity of complex use cases requiring increased synergy between hardware and software design,” said Dipti Vachani, senior vice president and general manager, Automotive and IoT Line of Business, Arm. “We have engineered Arm Custom Instructions to fuel closer hardware and software co-design efforts toward achieving application-specific acceleration while unlocking greater device differentiation.”

Architected as part of the evolution of the Armv8-M architecture with secure Arm TrustZone technology, Arm Custom Instructions give chip designers the opportunity to push performance and efficiency further by adding their unique application-specific features into Cortex-M33 CPUs.

Arm Custom Instructions are enabled by modifications to the CPU that reserve encoding space for designers to add custom datapath extensions while maintaining the integrity of the existing software ecosystem. This feature, together with the existing co-processor interface, enables Cortex-M33 CPUs to be extended with various types of accelerators optimized for edge compute use cases including machine learning (ML) and artificial intelligence (AI).
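The idea of reserving encoding space can be illustrated with a toy decoder. The sketch below is a simplified software model, not Arm's implementation: the opcode values, the `CUSTOM_RANGE` boundaries, the `ToyCpu` class, and the `popcount_xor` operation are all hypothetical names chosen for illustration. It only shows why custom operations confined to a reserved range cannot change the behavior of standard instructions.

```python
from typing import Callable, Dict

# Hypothetical opcode map for illustration only; real Armv8-M encodings differ.
STANDARD_OPS: Dict[int, Callable[[int, int], int]] = {
    0x01: lambda a, b: (a + b) & 0xFFFFFFFF,  # ADD
    0x02: lambda a, b: (a - b) & 0xFFFFFFFF,  # SUB
}
CUSTOM_RANGE = range(0xC0, 0xD0)  # assumed slice reserved for designer-defined ops


class ToyCpu:
    """Toy model: standard opcodes are fixed, custom ones live in a reserved range."""

    def __init__(self) -> None:
        self._custom: Dict[int, Callable[[int, int], int]] = {}

    def add_custom(self, opcode: int, fn: Callable[[int, int], int]) -> None:
        # Custom logic may only occupy the reserved range, so it can never
        # shadow or change the meaning of a standard instruction.
        if opcode not in CUSTOM_RANGE:
            raise ValueError(f"opcode {opcode:#x} is outside the reserved range")
        self._custom[opcode] = fn

    def execute(self, opcode: int, a: int, b: int) -> int:
        if opcode in STANDARD_OPS:
            return STANDARD_OPS[opcode](a, b)
        if opcode in self._custom:
            return self._custom[opcode](a, b) & 0xFFFFFFFF
        raise RuntimeError(f"undefined instruction {opcode:#x}")


def popcount_xor(a: int, b: int) -> int:
    """Example designer-defined operation: Hamming distance of two 32-bit words."""
    return bin((a ^ b) & 0xFFFFFFFF).count("1")


cpu = ToyCpu()
cpu.add_custom(0xC0, popcount_xor)
print(cpu.execute(0x01, 2, 3))            # standard ADD is unaffected
print(cpu.execute(0xC0, 0b1010, 0b0110))  # custom op in the reserved space
```

In this model, software that uses only standard opcodes runs identically whether or not a custom operation is registered, which is the compatibility property the reserved encoding space is meant to protect.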

Arm will offer Custom Instructions as a standard feature in future Cortex-M CPUs, which are among the most successful Arm CPUs ever, having shipped in more than 50 billion chips from Arm silicon partners to date.

Custom instructions are not new to CPU architecture, but there has always been a trade-off between design complexity and software and tools compatibility. Custom instructions can lead to expensive engineering costs as well as a fragmented software ecosystem.

Workload-specific optimization has been part of the Arm story forever, whether in the CPU instruction set or in dedicated coprocessors, such as GPUs (graphics processing units) and NPUs (neural processing units, also known as AI accelerators). A coprocessor interface has been part of several Arm CPU designs, enabling partners to introduce their own accelerators. These are essentially parallel computing engines external to the CPU. They address many use cases, but some would be better served by a finer-grained level of integration, with custom logic interleaved with standard CPU operation.

This is exactly what Arm Custom Instructions achieve: establishing the Arm CPU as a chassis that the company's partners can use to differentiate with their own technology.

Collaborating for an autonomous future

Arm is joining with automakers General Motors Co and Toyota Motor Corp to establish common computing systems for self-driving cars, an effort the companies hope will speed development of the technology.

Arm's ties to the automotive industry go back to the late 1990s, when automakers began to add computer chips to vehicles for functions like engine control and diagnostics.

On Tuesday the company said it was helping to create the Autonomous Vehicle Computing Consortium, or AVCC, along with the two automakers and industry suppliers Bosch Corp, Denso Corp and Continental AG. Also helping found the group are semiconductor companies Nvidia Corp and NXP Semiconductors, both of which embed Arm’s technology into their chips.

The AVCC will be an independent group funded by membership fees from the companies that join. Arm officials said its work will be open to non-members.

The group’s first task will be to establish a common computing architecture. That effort aims to make it easier for car companies to write software that will work on chips from different vendors, similar to how Microsoft Windows-based software works on processors from Intel or Advanced Micro Devices Inc.

A Total Compute approach

Arm executives also talked today about the multiple transformations our industry is facing, from challenges in process scaling and data privacy to a very fragmented ecosystem. While 5G will create a world of opportunity, Arm says that we still need to deliver the power, performance, and efficiency for the next generation of immersive experiences.

Along with 5G, the acceleration of AI, XR, and IoT is changing compute requirements. The performance we need for digital immersion is going to have to push beyond what we have today, towards the world of Total Compute. This requires a very different approach to how we design our IP, with a deep focus on optimizing performance, security, and developer access.

Since Arm announced the Cortex-A73, the company has gradually increased machine learning (ML) performance generation over generation, and today Arm is working to broaden its CPU coverage for ML. Arm today added Matrix Multiply (MatMul) to its next-generation Cortex CPU, “Matterhorn”, effectively doubling ML performance over previous generations.
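To make the MatMul idea concrete, here is a minimal pure-Python sketch of a matrix multiply-accumulate on a small tile. The article does not describe the instruction's operand shapes, so the 2x8 by 8x2 tile with a 2x2 accumulator used here is an assumption for illustration, and `matmul_accumulate` is a hypothetical helper, not an Arm API.

```python
from typing import List

Matrix = List[List[int]]


def matmul_accumulate(acc: Matrix, a: Matrix, b: Matrix) -> Matrix:
    """Return acc + a @ b for small integer matrices (pure-Python model)."""
    rows, inner, cols = len(a), len(b), len(b[0])
    assert all(len(row) == inner for row in a), "inner dimensions must match"
    return [
        [acc[i][j] + sum(a[i][k] * b[k][j] for k in range(inner))
         for j in range(cols)]
        for i in range(rows)
    ]


# Assumed tile shape: a 2x8 input times an 8x2 input into a 2x2 accumulator.
a = [[1] * 8, [2] * 8]
b = [[1, -1] for _ in range(8)]
acc = [[0, 0], [0, 0]]

tile = matmul_accumulate(acc, a, b)
print(tile)
```

Under this assumed shape, one operation covers 32 multiply-accumulates that would otherwise be issued as separate scalar or vector steps, which is the kind of saving a dedicated matrix instruction targets for ML workloads.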

Beyond the CPU, the Total Compute approach should be applied to each compute element and its infrastructure within the system. Whether it’s an Arm CPU, GPU, NPU, interconnect, or system IP, it must be optimized as an integrated solution. And this relies on the software and tools to make it happen, including Arm NN, the Arm Compute Library, the open source community, and open standards, all built on a secure foundation.

We are increasingly relying on our mobile phones as the hub for all our personal information, and you can’t have privacy without security. Security within Total Compute is based on layers.

Securing the digital world

Arm is already starting to roll out security features like Memory Tagging Extension (MTE), as part of Total Compute, to meet the various needs of its customers. In fact, Google recently announced its plans to partner with Arm to design MTE for Android devices. These features, combined with Platform Security Architecture (PSA), will help to standardize and defragment security across the entire ecosystem.

Enabling developers under a common architecture

As part of Arm’s commitment to developer enablement, Arm announced an expanded partnership with Unity Technologies. Arm and Unity will work together to further optimize performance on Arm-based SoCs, CPUs, and GPUs, allowing developers to spend more time creating new content.