Hot Chips Symposium Coming August 18th, AI Takes a Front-row Seat

The Hot Chips Symposium returns to Stanford on August 18-20 with the latest high-performance “big iron” server processors and a new crop of special-purpose silicon for artificial intelligence and deep learning.

Hot Chips will showcase silicon targeting deep learning training and inference, graphics, embedded systems, automotive, memory and interconnect.

The program has just been announced and features a diverse set of artificial intelligence silicon disclosures from large companies such as Intel, Huawei, Nvidia and Xilinx, as well as new startup silicon from Cerebras, Habana, and Wave Computing. Academia is also in the mix with a presentation from Princeton University.

The program also includes two keynotes, one from AMD and one from TSMC. AMD’s CEO, Lisa Su, will speak on high-performance computing. Dr. Philip Wong, TSMC’s VP of Research and Development, will share insights into the silicon industry and its future.

Hot Chips will bring together top companies that are not only building the next generation of intelligent chips but also focusing on systems “at scale”:

- Cerebras: Wafer scale deep learning

- Habana: Scaling AI training

- Huawei: Unified architecture for Neural Network computing

- Intel: Deep Learning Training at Scale

- Nvidia: MCM-based deep neural network accelerator

- Wave Computing: MIPS Triton AI processing platform

- Xilinx: Versal AI engine

Cerebras will describe a much-anticipated device using wafer-scale integration. Habana, already shipping an inference chip, will show its follow-on for training.

UpMem will disclose a new processor-in-memory, believed to be based on DRAM and aimed at multiple uses.

The startups will compete with giants such as Intel, which is describing Spring Hill and Spring Crest, its inference and training chips based on its Nervana architecture. Alibaba will disclose an inference processor for embedded systems.

In addition, Huawei, MIPS, Nvidia, and Xilinx will provide new details on their existing deep-learning chips. Members of the MLPerf group are expected to describe their inference benchmark for data center and embedded systems, a follow-on to their training benchmark.

A senior engineer from Huawei is expected to talk about the company’s Ascend 310/910 AI chips. However, given that Huawei is in the crosshairs of the U.S./China trade war, it’s unclear whether the speaker will be able to get a visa or will face other obstacles.

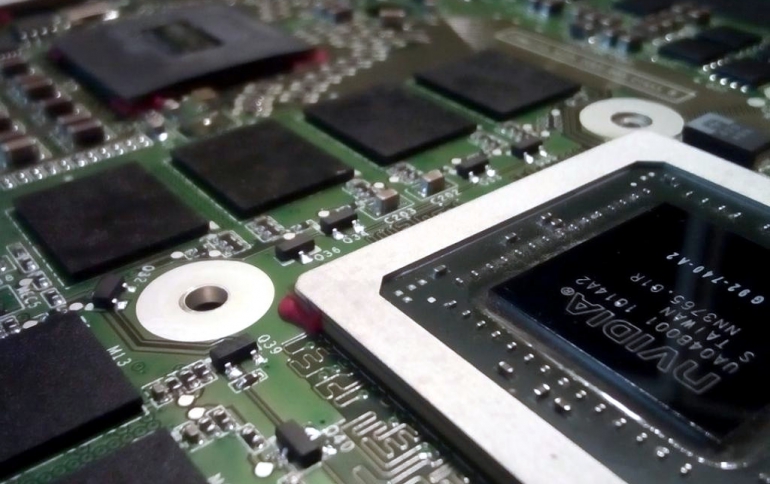

Nvidia dominates the market for AI training chips with its V100. The company will describe a research effort on a multi-chip module for inference tasks that it says delivers 0.11 picojoules/operation across a range of 0.32–128 tera-operations/second.
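To put those figures in perspective, power is simply energy per operation multiplied by operations per second. The short sketch below works through that arithmetic under the loud assumption that the quoted 0.11 picojoules/operation held at both ends of the 0.32–128 tera-operations/second range, which the announcement does not confirm; the function name `power_watts` is made up for illustration.

```python
def power_watts(energy_pj_per_op: float, tera_ops_per_s: float) -> float:
    """Power in watts = energy per operation (J) x operations per second."""
    joules_per_op = energy_pj_per_op * 1e-12   # picojoules -> joules
    ops_per_s = tera_ops_per_s * 1e12          # tera-ops/s -> ops/s
    return joules_per_op * ops_per_s


# Assumption: the same 0.11 pJ/op applies across the whole throughput range.
for tops in (0.32, 128.0):
    print(f"{tops} TOPS -> {power_watts(0.11, tops):.4f} W")
```

Under that assumption the compute power would span roughly 0.035 W at the low end to about 14 W at the high end. Real figures would differ if efficiency varies with operating point, and this estimate ignores memory, I/O, and other system power.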

Google will describe details of its liquid-cooled, third-generation TPU. A representative of Microsoft’s Azure will discuss its next-generation FPGA hardware. And a member of Amazon’s AWS will cover its I/O and system acceleration hardware.

A Facebook engineer will describe Zion, its multiprocessor system for training announced at the Open Compute Summit earlier this year.

AMD will discuss Zen 2, its next-generation x86 core for both client and server systems. IBM will present a next-generation server processor, believed to be the Power 10.

Electric car maker Tesla will provide more details on its recent disclosure of silicon for self-driving cars. In separate talks, Intel will give more details on its Optane memories and its emerging packaging technologies.

Hewlett Packard Enterprise will describe the first chipset for Gen-Z, an open interface for distributed memory and storage that is agnostic to the many emerging memory architectures. Separately, Ayar Labs will describe its TeraPHY high-speed interconnect.

AMD (7nm “Navi” GPU) and Nvidia (RTX ON: The NVIDIA Turing GPU Architecture) will close the conference with talks on their latest GPUs geared for high-performance computing.