NVIDIA Says New TITAN V GPUs Transform the PC into AI Supercomputer

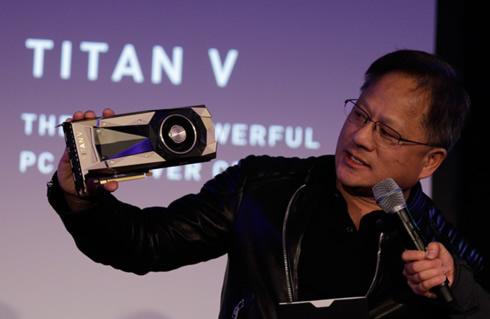

NVIDIA CEO Jensen Huang on Thursday lit up a gathering of deep learning researchers at the Conference and Workshop on Neural Information Processing Systems (NIPS) in Long Beach, Calif., by unveiling TITAN V, Nvidia's latest GPU.

"What NVIDIA is all about is building tools that advance computing, so we can do things that would otherwise be impossible," Huang, dressed in his trademark black leather jacket, told the crowd as he kicked off the evening. "Our ultimate purpose is to build computing platforms that allow you to do groundbreaking work."

Twenty of the researchers received one of the first TITANs based on the company's latest Volta architecture.

Volta, Nvidia's seventh-generation GPU architecture, features a redesign of the streaming multiprocessor at the center of the GPU. It doubles the energy efficiency of the previous-generation Pascal design, enabling dramatic boosts in performance in the same power envelope.

New Tensor Cores designed for deep learning deliver up to 9x higher peak teraflops. With independent parallel integer and floating-point data paths, Volta is also much more efficient on workloads with a mix of computation and addressing calculations. Its new combined L1 data cache and shared memory unit improves performance while also simplifying programming.

The Titan V and Tesla V100 rock 21 billion transistors and 5,120 CUDA cores running at 1,455MHz. That downright dwarfs Pascal's flagship data center GPU, the Tesla P100, which packs 15 billion transistors and 3,584 CUDA cores running at a slightly faster 1,480MHz maximum clock speed. The TITAN V delivers 110 teraflops of raw horsepower, Huang told the crowd.
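As a rough sanity check on the core count and clock speed quoted above, theoretical peak single-precision throughput can be estimated with a few lines of Python. This is a back-of-the-envelope sketch, not an official NVIDIA formula: it assumes each CUDA core completes one fused multiply-add per clock, counted as two floating-point operations, and the function name `peak_fp32_tflops` is purely illustrative. The 110-teraflop figure Huang quoted refers to Tensor Core deep learning throughput, which this estimate does not model.

```python
def peak_fp32_tflops(cuda_cores: int, clock_mhz: float,
                     flops_per_core_per_clock: int = 2) -> float:
    """Estimate theoretical peak FP32 throughput in teraflops.

    Assumption: each CUDA core retires one fused multiply-add per clock,
    which is conventionally counted as 2 floating-point operations.
    """
    flops_per_second = cuda_cores * flops_per_core_per_clock * clock_mhz * 1e6
    return flops_per_second / 1e12


# Figures quoted in the article for the TITAN V: 5,120 cores at 1,455MHz.
titan_v_tflops = peak_fp32_tflops(cuda_cores=5120, clock_mhz=1455)
print(f"TITAN V estimated peak FP32: {titan_v_tflops:.1f} TFLOPS")
```

With the article's numbers this works out to roughly 14.9 teraflops of conventional single-precision compute, which shows how much of the headline figure depends on the Tensor Cores.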

Fabricated on a new TSMC 12-nanometer FFN high-performance manufacturing process customized for NVIDIA, TITAN V also incorporates Volta's 12GB HBM2 memory subsystem for advanced memory bandwidth utilization.

Moving into specifics, the Titan V uses Nvidia's GV100 GPU - the same as the Tesla V100 PCIe. It has the GV100 GPU's full FP64 compute and tensor core performance. However, compared to the Tesla V100, it has less memory capacity and memory bandwidth, and it lacks NVLink functionality.

The Titan V features 80 streaming multiprocessors (SMs) and 5,120 CUDA cores, the same number as its Tesla V100 siblings. It has 12GB of HBM2 attached via a 3072-bit memory bus. L2 cache is 4.5MB. In terms of clock speeds, the HBM2 has been downclocked slightly to 1.7GHz, while the 1,455MHz boost clock actually matches the 300W SXM2 variant of the Tesla V100.
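Two derived numbers fall out of these specs: the CUDA cores per SM and the peak memory bandwidth. The sketch below is a simple illustration with hypothetical helper names. It assumes the quoted 1.7GHz is the effective per-pin data rate, meaning 1.7 gigabits per second on each bit of the bus, which the article does not spell out.

```python
def cores_per_sm(cuda_cores: int, sm_count: int) -> int:
    """CUDA cores in each streaming multiprocessor."""
    return cuda_cores // sm_count


def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in gigabytes per second.

    Assumption: data_rate_gbps is the effective transfer rate per pin,
    so total bits per second = bus width * per-pin rate; divide by 8 for bytes.
    """
    return bus_width_bits * data_rate_gbps / 8


# Figures quoted in the article for the Titan V.
print(cores_per_sm(cuda_cores=5120, sm_count=80), "CUDA cores per SM")
bandwidth = memory_bandwidth_gb_s(bus_width_bits=3072, data_rate_gbps=1.7)
print(f"{bandwidth:.1f} GB/s peak memory bandwidth")
```

Under that assumption, the card has 64 CUDA cores per SM and about 652.8GB/s of peak memory bandwidth.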

The card features a vapor chamber cooler with copper heatsink and 16 power phases, all for the 250W TDP that has become standard with the single GPU Titan models. It also brings 3 DisplayPorts and 1 HDMI connector.

NVIDIA has been selling the Tesla V100 PCIe card for about $10,000. The Titan V goes on sale for much less, priced at $3,000.

Along with Nvidia's earlier-generation TITAN Xp GPU, TITAN V will be supported by the NVIDIA GPU Cloud - or NGC - giving users instant access to Nvidia's deep learning software stack.

But make no mistake: This monster targets data scientists, with a tensor core-laden hardware configuration designed to optimize deep learning tasks. You won't want to buy this $3,000 GPU to play games.

Gamers, meanwhile, will be asking when to expect Volta-based GeForce graphics cards - a Volta-based GTX 1080 Ti successor, anyone?