Google's Augmented Reality SDK ARCore 1.0 Released, Google Lens Updated

Google has released ARCore 1.0, letting anyone publish Android apps that take advantage of the toolkit to meld virtual objects with the real world.

ARCore enables developers to build apps that can understand your environment and place objects and information in it. Google Lens uses your camera to help make sense of what you see; for example, it can automatically create contact information from a business card before you lose it. At Mobile World Congress, Google is launching ARCore 1.0 along with new support for developers, and the company is releasing updates for Lens and rolling it out to more people.

ARCore, Google's augmented reality SDK for Android, is out of preview and launching as version 1.0. Developers can now publish AR apps to the Play Store. ARCore works on 100 million Android smartphones, and AR capabilities are available on all of these devices. It currently supports 13 models: Google's Pixel, Pixel XL, Pixel 2 and Pixel 2 XL; Samsung's Galaxy S8, S8+, Note8, S7 and S7 edge; LGE's V30 and V30+ (Android O only); ASUS's Zenfone AR; and OnePlus's OnePlus 5. Beyond those available today, Google is partnering with many manufacturers to enable their upcoming devices this year, including Samsung, Huawei, LGE, Motorola, ASUS, Xiaomi, HMD/Nokia, ZTE, Sony Mobile, and Vivo.

ARCore 1.0 features improved environmental understanding that enables users to place virtual assets on textured surfaces like posters, furniture, toy boxes, books, cans and more. In addition, the Android Studio Beta now supports ARCore in the Emulator, so developers can quickly test their apps in a virtual environment right from their desktops.

Google will be supporting ARCore in China on partner devices sold there - starting with Huawei, Xiaomi and Samsung - to enable them to distribute AR apps through their app stores.

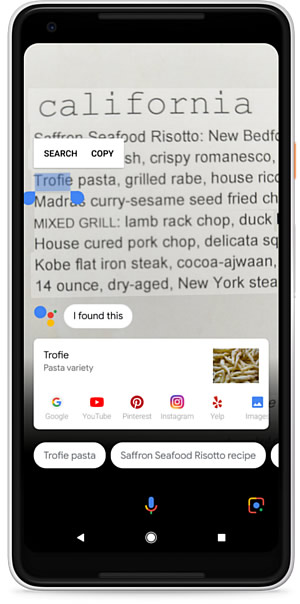

With Google Lens, your phone's camera can help you understand the world around you, and Google is expanding availability of the Google Lens preview. With Lens in Google Photos, when you take a picture, you can get more information about what's in your photo. In the coming weeks, Lens will be available to all Google Photos English-language users who have the latest version of the app on Android and iOS. Also over the coming weeks, English-language users on compatible flagship devices will get the camera-based Lens experience within the Google Assistant.

Since launch, Google has added text selection features to Google Lens, along with the ability to create contacts and events from a photo in one tap. In the coming weeks, Google Lens will also offer improved support for recognizing common animals and plants, like different dog breeds and flowers.