1. Introduction

Writing Quality - Page 1

- Introduction

We have all heard the familiar clicks and skips from our CD Audio Player that

result from a scratched, damaged, or dirty CD disc. Sometimes the effects of

disc defects can be subtle and result in audio distortion instead of obvious

clicks and skips. This distortion results from the inability of a standard CD player's

error correction codes (ECC) to correct for the ablation of the underlying audio

information by a scratch defect or electrical noise.

In fact, when the CD ECC fails, many CD drives have special circuitry called

"error concealment" circuitry to conceal the errors that ECC was unable

to fix. The error concealment circuitry introduces distortion while it tries

to interpolate through the errors. Many types of error concealment circuitry

will even insert audio noise when activated to mask the errors. This is hardly

what lovers of high fidelity audio reproduction seek.

In order to complete this article we used information from:

- Calimetrics (http://www.calimetrics.com)

- ECMA (http://www.ecma.ch/)

- Kelin Kuhn's paper (http://www.ee.washington.edu/conselec/CE/kuhn/cdaudio/95x6.htm)

- Clover Systems (http://www.cloversystems.com)

- YAMAHA Japan (http://www.yamaha.co.jp/computer)

- Plextor Europe (http://www.plextor.be)

- Pioneer Japan (http://www.pioneer.co.jp)

- Quantized (http://www.quantized.com)

- MS Science (http://www.mscience.com)

- Philips (http://www.licensing.philips.com)

- Advanced Surface Microscopy (http://www.asmicro.com/)

- Eclipse Data (http://www.eclipsedata.com)

- MMC Draft Standard (http://www.t10.org)

- JVC Japan (http://www.victor.co.jp/products/others/ENCK2.html)

2. Pits and Lands

- General information about Pits and Lands

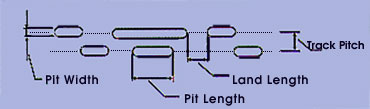

An enlarged view of the surface of a CD looks like this.

Pit structure

In a CD-player, a laser beam with a specific wavelength detects the digital

code by determining the lengths of the pits and the lands. Therefore, it is

important that the shape of the pits as well as the intervals meet the necessary

preconditions. In the pit structure the following basic parameters can be recognized:

Typical pit parameters

Each pit is approximately 0.5 microns wide and 0.83 microns to 3.56 microns

long. (Remember that the wavelength of green light is approximately 0.5 micron)

Each track is separated from the next track by 1.6 microns. The leading and

trailing edges of the pits represent ones, and the length of each pit represents

the number of zeros. The spaces between the pits, called lands, are also of varying

lengths and represent only zeros.

The CD laser 'reads' the pit-information by processing the reflected wave signals.

The reflection is caused by the aluminium layer of the CD. The laser beam that

is focussed on the pit track 'recognizes' the transition between pits and lands.

Thus it is not the pits or lands themselves but the pit edges that carry the data

information. The pits are encoded with Eight-to-Fourteen Modulation

(EFM) for greater storage density and Cross-Interleave Reed-Solomon

Code (CIRC) for error correction.

3. Error Correction - Page 1

- Introduction

With analogue audio, there is no opportunity for error correction. With digital

audio, the nature of binary data lends itself to recovery in the event of damage.

When audio data is stored, it can be specially coded and accompanied by redundancy.

This enables the reproduced data to be checked for error. Error correction is

an opportunity to preserve data integrity, and it is absolutely necessary to

ensure the success of digital audio storage. With proper design, the error rate of CD and DVD

can be reduced to 10^-12, that is, less than one uncorrectable

error in 10^12 bits.

- Sources of Errors

Optical media can be affected by pit asymmetry, bubbles or defects in the substrate,

and coating defects. The most significant cause of error in digital media is

dropouts, essentially a defect in the media that causes a momentary drop in

signal strength. Dropouts can be traced to two causes: a manufactured defect

in the media or a defect introduced during use. A loss of data or invalid data

can provoke a click or pop. An error in the least significant bit of a PCM word

might pass unnoticed, but an error in the most significant bit would create

a drastic change in amplitude.
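The difference between an LSB and an MSB error can be illustrated with a minimal sketch. It assumes 16-bit two's-complement PCM words; the function name `flip_bit` and the sample value are purely illustrative.

```python
def flip_bit(sample: int, bit: int, width: int = 16) -> int:
    """Flip one bit of a two's-complement PCM word and return the signed result."""
    mask = (1 << width) - 1
    raw = (sample & mask) ^ (1 << bit)          # work on the unsigned bit pattern
    # Convert the bit pattern back to a signed value.
    return raw - (1 << width) if raw & (1 << (width - 1)) else raw


sample = 1000                                   # illustrative sample value
lsb_error = flip_bit(sample, 0)                 # least significant bit corrupted
msb_error = flip_bit(sample, 15)                # most significant bit corrupted
print("LSB error changes the sample by", lsb_error - sample)
print("MSB error changes the sample by", msb_error - sample)
```

Under these assumptions the LSB error shifts the sample by a single step, while the MSB error shifts it by half the full 16-bit range.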

- Separation of Errors

Errors that have no relation to each other are called random-bit errors.

A burst error is a large error, disrupting perhaps thousands of bits.

An important characteristic of any error-correction system is the burst length,

that is, the maximum number of adjacent erroneous bits that can be corrected.

Error-correction design is influenced by the kind of modulation code used to

convey the data. With normal wear and tear, oxide particles coming from the

backing and other foreign particles, such as dust, dirt, and oil from fingerprints,

can contribute to the number of dropouts. Scratches perpendicular

to the tracks are easier to correct, but a scratch along a track could

be impossible to correct.

- The bit-error rate (BER) is the number of bits received in error

divided by the total number of bits received. An optical disc system can employ

error-correction algorithms able to handle a BER of 10^-5 to 10^-4.

- The block error rate (BLER) measures the number of blocks or frames

of data per second that have at least one occurrence of uncorrected data.

- The burst-error length (BERL) counts the number of consecutive blocks

in error.
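As a rough sketch of how the BER and the burst-error length above could be computed from test data, assuming the sent and received bits and the per-block error flags are available as Python lists (the function names are hypothetical):

```python
def bit_error_rate(sent, received):
    """Number of bits received in error divided by the total number of bits."""
    errors = sum(s != r for s, r in zip(sent, received))
    return errors / len(sent)


def longest_burst(block_in_error):
    """Largest number of consecutive blocks flagged as being in error."""
    longest = current = 0
    for bad in block_in_error:
        current = current + 1 if bad else 0
        longest = max(longest, current)
    return longest


print(bit_error_rate([0, 1, 1, 0], [0, 1, 0, 0]))
print(longest_burst([False, True, True, True, False, True]))
```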

- Objectives of error correction

Redundancy alone will not ensure accuracy of the recovered information; appropriate

error detection and correction coding must be used. An error-correction system

comprises three operations :

- Error detection uses redundancy to permit data to be checked for validity.

- Error correction uses redundancy to replace erroneous data with newly calculated

valid data.

- In the event of large errors or insufficient data for correction, error

concealment techniques substitute approximately correct data for invalid data.

4. Error Correction - Page 2

- Error Detection - Single-bit parity

All error-detection and correction techniques are based on the redundancy of

data. Practical error detection uses techniques in which redundant data is coded

so it can be used to efficiently check for errors. The technique of casting

out 9s can be used to cast out any number, and forms the basis for a binary

error detection method called parity. Given a binary number, a residue

bit can be formed by casting out 2s. This extra bit, known as a parity bit,

permits error detection, but not correction.

An even parity bit is formed with a simple rule : if the number of 1s in the

data word is even, the parity bit is a 0; if the number of 1s in the word is

odd, the parity bit is a 1. An 8-bit data word, made into a 9-bit word with

an even parity bit, will always have an even number of 1s.
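The even-parity rule can be sketched in a few lines. The helper names are illustrative; the last check shows why a single parity bit detects an odd number of bit errors but misses a double error.

```python
def even_parity_bit(word: int) -> int:
    """Return 0 if the word has an even number of 1s, otherwise 1."""
    return bin(word).count("1") % 2


def passes_even_parity(codeword: int) -> bool:
    """A valid codeword (data plus parity bit) always has an even number of 1s."""
    return bin(codeword).count("1") % 2 == 0


data = 0b10110100                                # 8-bit data word with four 1s
codeword = (data << 1) | even_parity_bit(data)   # 9-bit word with parity appended

print(passes_even_parity(codeword))              # intact word
print(passes_even_parity(codeword ^ 0b1))        # one bit flipped: detected
print(passes_even_parity(codeword ^ 0b11))       # two bits flipped: goes unnoticed
```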

- Cyclic Redundancy Check Code

The cyclic redundancy check code (CRCC) is an error detection method

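preferred in audio applications. The CRCC is a cyclic block code that generates

a parity check word. As a simplified illustration of a check word: in 1011011010, the six binary 1s are added together to

form binary 0110 (6), and this check word is appended to the data word to form

the code word for storage. A practical CRCC derives its check word from a polynomial division of the data word rather than from a simple count.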
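A cyclic redundancy check is normally computed as the remainder of a modulo-2 polynomial division. The following is a minimal sketch of that idea, not the CRCC of any particular audio format: the generator polynomial x^3 + x + 1 is an arbitrary assumption chosen to keep the example small, and the function names are hypothetical.

```python
def gf2_mod(value: int, generator: int) -> int:
    """Remainder of value divided by generator, with both read as GF(2) polynomials."""
    degree = generator.bit_length() - 1
    while value.bit_length() > degree:
        # Align the generator with the highest set bit and subtract (XOR) it.
        value ^= generator << (value.bit_length() - 1 - degree)
    return value


def crc_encode(data: int, generator: int) -> int:
    """Append the check word so that the code word is divisible by the generator."""
    degree = generator.bit_length() - 1
    shifted = data << degree
    return shifted | gf2_mod(shifted, generator)


GENERATOR = 0b1011                 # x^3 + x + 1, an assumed toy generator
data_word = 0b1011011010           # the data word used in the text
code_word = crc_encode(data_word, GENERATOR)

print(gf2_mod(code_word, GENERATOR) == 0)              # intact code word checks out
print(gf2_mod(code_word ^ (1 << 5), GENERATOR) == 0)   # a flipped bit is detected
```

On playback the same division is repeated; a nonzero remainder signals that the code word was damaged.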

- Error Correction Codes

With the use of redundant data, it is possible to correct errors that occur

during storage or transmission. However, there are many types of codes, different

in their designs and functions. The field of error-correction codes is a highly

mathematical one. In general, two approaches are used: block codes using algebraic

methods, and convolutional codes using probabilistic methods. In some cases, algorithms

use a block code in a convolutional structure known as a cross-interleave code.

Such codes are used in the CD format.

- Interleaving

Error correction depends on an algorithm's ability to efficiently use redundant

data to reconstruct invalid data. When the error is sustained, as in the case

of a burst error, both the data and the redundant data are lost, and correction becomes

difficult or impossible. Data is interleaved or dispersed through the data stream

prior to storage or transmission. With interleaving, the largest error that

can occur in any block is limited to the size of the interleaved section. Interleaving

greatly increases burst error correctability of the block codes.
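How interleaving spreads a burst can be shown with a simple block interleaver. This is only a sketch: the CD format uses delay-line interleaving rather than the row/column scheme assumed here, and the word size and burst position are illustrative.

```python
def interleave(symbols, rows, cols):
    """Write the symbols row by row, then read them out column by column."""
    assert len(symbols) == rows * cols
    return [symbols[r * cols + c] for c in range(cols) for r in range(rows)]


def deinterleave(symbols, rows, cols):
    """Inverse of interleave(): restore the original row-by-row order."""
    assert len(symbols) == rows * cols
    return [symbols[c * rows + r] for r in range(rows) for c in range(cols)]


rows, cols = 4, 6                      # four words of six symbols each
data = list(range(rows * cols))
sent = interleave(data, rows, cols)

received = sent.copy()
for i in range(8, 12):                 # a burst wipes out four adjacent symbols
    received[i] = None

recovered = deinterleave(received, rows, cols)
errors_per_word = [recovered[r * cols:(r + 1) * cols].count(None) for r in range(rows)]
print(errors_per_word)
```

After de-interleaving, the four-symbol burst appears as one missing symbol in each of the four words, which a block code with modest correction ability can handle.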

- Cross interleaving

Although the burst is scattered, the random errors add additional errors in

a given word, perhaps overloading the correction algorithm. One solution is

to generate two correction codes, separated by an interleave and delay. When

block codes are arranged in rows and columns two dimensionally, the code is

called a product code (as used in DVD). When two block codes are separated by both interleaving

and delay, cross-interleaving results. A cross-interleaved code comprises two

(or more) block codes assembled with a convolutional structure.

The above picture shows a Cross Interleaving encoder as used in the CD format.

Syndromes from the first block are used as error pointers in the second block.

In the CD format, k2=24, n2=28, k1=28 and n1=32; the C1 and C2 codes are Reed-Solomon

codes.

5. Error Correction - Page 3

- Error Concealment

A practical error-correction method allows severe errors to remain uncorrected.

However, subsequent processing - an error-concealment system - compensates for

those errors and ensures that they are not audible.

There are two kinds of uncorrectable errors :

- Detected but not corrected : can be concealed with properly designed concealment

methods.

- Undetected and miscorrected : cannot be concealed and might result in an

audible click in the audio output.

- Interpolation

Following de-interleaving, most errors are interspersed with valid data words.

It is thus reasonable to use techniques in which surrounding valid data is used

to calculate new data to replace the missing or incorrect data.

- Zero-order or previous-value interpolation: the previous sample value is held

and repeated to cover the missing or incorrect sample.

- First-order or linear interpolation: the erroneous sample is replaced

with a new sample derived from the mean value of the previous and subsequent

samples.

In many digital audio systems, a combination of zero- and first-order interpolation

is used. Other higher-order interpolation is sometimes used.
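A combined zero- and first-order concealment scheme might look like the sketch below. It assumes each sample arrives with an error flag from the decoder; the function name `conceal` and the fallback rules are illustrative, not those of any specific player.

```python
def conceal(samples, bad):
    """Replace flagged samples using linear interpolation or a previous-value hold."""
    out = list(samples)
    for i in range(len(out)):
        if not bad[i]:
            continue
        has_prev = i > 0                               # out[i - 1] is good or already concealed
        next_good = i + 1 < len(out) and not bad[i + 1]
        if has_prev and next_good:
            out[i] = (out[i - 1] + out[i + 1]) // 2    # first-order: mean of neighbours
        elif has_prev:
            out[i] = out[i - 1]                        # zero-order: hold previous value
        else:
            out[i] = 0                                 # nothing to hold yet: mute
    return out


print(conceal([0, 10, 999, 30, 40], [False, False, True, False, False]))
print(conceal([0, 10, 999, 999, 40], [False, False, True, True, False]))
```

With a single bad sample the gap is filled by the mean of its neighbours; with two bad samples in a row the first is held and the second is interpolated.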

- Muting

Muting is the simple process of setting the value of missing or uncorrected

words to zero. Muting might be used in the case of uncorrected errors, which

would otherwise cause an audible click at the output. Also in the case of severe

data damage or player malfunction, it is preferable to mute the data output.

To minimize audibility of a mute, muting algorithms gradually attenuate the

output signal's amplitude prior to a mute, and then gradually restore the amplitude

afterward. Such muting for 1 to 4 ms durations cannot be perceived by the human

ear.

6. CIRC - Page 1

- Reed-Solomon Codes

For compact discs, Reed-Solomon codes are used and the algorithm is known as

the Cross-Interleave Reed-Solomon code (CIRC). Reed-Solomon codes exclusively

use polynomials derived using finite field mathematics, known as Galois fields,

to encode and decode block data. Either multiplication or addition can be used

to combine elements, and the result of adding or multiplying two elements is

always a third element contained in the field. In addition, there exists at

least one element called a primitive such that every other element can be expressed

as a power of this element.

The size of the Galois field, which determines the number of symbols in the

code, is based on the number of bits comprising a symbol; 8-bit symbols are

commonly used. A primitive polynomial often used in GF(2^8) systems is x^8+x^4+x^3+x^2+1.

The code can use the input word to generate two types of parity, P and Q. The

P parity can be a modulo 2 sum of the symbols. The Q parity multiplies each

input word by a different power of the GF primitive element. If one symbol is

erroneous, the P parity gives a nonzero syndrome S1. The Q parity yields a syndrome

S2. By checking this relationship between S1 and S2, the RS code can locate

the error. Correction is performed by adding S1 to the designated location.
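The P/Q mechanism described above can be sketched with a toy two-parity code over GF(2^8). This is a simplified illustration rather than the actual CIRC encoder: it assumes the primitive element is 2, that only one data symbol is wrong, and that the stored parities themselves are intact. All names are hypothetical.

```python
PRIMITIVE_POLY = 0x11D                 # x^8 + x^4 + x^3 + x^2 + 1

# Antilog (EXP) and log tables for GF(2^8), generated by the primitive element 2.
EXP = [0] * 510
LOG = [0] * 256
value = 1
for power in range(255):
    EXP[power] = value
    LOG[value] = power
    value <<= 1
    if value & 0x100:
        value ^= PRIMITIVE_POLY
for power in range(255, 510):
    EXP[power] = EXP[power - 255]


def gf_mul(a, b):
    """Multiply two field elements using the log tables."""
    if a == 0 or b == 0:
        return 0
    return EXP[LOG[a] + LOG[b]]


def parities(symbols):
    """P is the modulo-2 sum; Q weights symbol i by the i-th power of the primitive."""
    p = q = 0
    for i, s in enumerate(symbols):
        p ^= s
        q ^= gf_mul(EXP[i], s)
    return p, q


def correct_single_error(symbols, p, q):
    """Locate one bad symbol from the syndromes S1 and S2 and add S1 to it."""
    p_now, q_now = parities(symbols)
    s1, s2 = p ^ p_now, q ^ q_now
    if s1 == 0 and s2 == 0:
        return list(symbols)                        # no error detected
    if s1 == 0 or s2 == 0:
        raise ValueError("not a single data-symbol error")
    position = (LOG[s2] - LOG[s1]) % 255            # S2 = alpha^position * S1
    if position >= len(symbols):
        raise ValueError("error location outside the word")
    fixed = list(symbols)
    fixed[position] ^= s1                           # addition in GF(2^8) is XOR
    return fixed


word = [0x12, 0x34, 0x56, 0x78, 0x9A]
p, q = parities(word)
damaged = word.copy()
damaged[3] ^= 0x5C                                  # corrupt one symbol
print(correct_single_error(damaged, p, q) == word)
```

For a single error of value e at position i, S1 equals e and S2 equals e multiplied by the i-th power of the primitive, so the ratio of the two syndromes reveals the location.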

Reed-Solomon codes are used for error correction in the compact disc, DAT,

DVD, direct broadcast satellite, digital radio and digital television applications.

- Cross-Interleave Reed Solomon Code

The particular error correction code used in the CD system is called the Cross

Interleave Reed-Solomon Code, or CIRC, followed by EFM.

CIRC (Cross Interleaved Reed-Solomon Code) is the error detection and

correction technique used on a CD (DVD uses a related Reed-Solomon product code). It is a variant

of the more general BCH codes. These codes are actually bytes added during premastering

or recording to the normal data bytes for achieving error free reading of a

disc. The CIRC bytes are present in all CD modes (audio and data alike). The

whole error correction method that makes use of these added CIRC bytes is commonly

referred to as the CIRC based algorithm.

While the laser head reads the bits off the disc surface with about one

erroneous bit in every 10^6, the basic level of error correction provided for

the Audio CD by CIRC results in only one unrecoverable bit in every 10^9

bits read! CD-ROM provides additional protection for data (ECC/EDC), the so-called

layer 3 (L3) error correction, reducing this error rate to just one bit in

10^12 bits!

Let's see how this is important. One data bit corresponds to 3.0625 so-called

"channel bits". These are the ones that are actually recorded onto

a disc surface. A whole disk is 333,000 sectors long. Each sector contains 2,048

data bytes. By performing the necessary simple arithmetic calculations, we can

easily see that every data disk would be unreadable without the implementation

of both the above error correcting codes.
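The arithmetic referred to above can be worked through as follows. The sketch applies each quoted error rate to the user data bits of a full disc, which is an assumption about how the figures are meant to be read; the variable names are illustrative.

```python
SECTORS = 333_000
BYTES_PER_SECTOR = 2_048
CHANNEL_BITS_PER_DATA_BIT = 3.0625

data_bits = SECTORS * BYTES_PER_SECTOR * 8
channel_bits = data_bits * CHANNEL_BITS_PER_DATA_BIT

# Expected number of erroneous bits per disc at each protection level.
expected_raw = data_bits * 1e-6        # no error correction
expected_circ = data_bits * 1e-9       # after CIRC
expected_l3 = data_bits * 1e-12        # after CIRC plus layer 3 ECC/EDC

print(f"data bits per disc:    {data_bits:,}")
print(f"channel bits per disc: {channel_bits:,.0f}")
print(f"expected bad bits, uncorrected: {expected_raw:.0f}")
print(f"expected bad bits, CIRC only:   {expected_circ:.1f}")
print(f"expected bad bits, CIRC + L3:   {expected_l3:.4f}")
```

Under these assumptions an uncorrected disc would carry thousands of bad bits, a CIRC-only disc would still be expected to contain a few, and only with layer 3 does the expected count fall well below one per disc.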

CIRC corrects error bursts up to 3,500 bits (2.4 mm in length) and compensates

for error bursts up to 12,000 bits (8.5 mm) such as caused by minor scratches.

The CIRC is designed such that the C1 code corrects most of the random errors

and C2 code corrects most of the burst errors. The interleaving between the C1

and C2 codes makes it easier for the C2 Reed-Solomon code to

correct burst errors. Interleaving also protects against burst errors appearing

in the audio stream: a long burst due to a failed C2 creates short audio errors.

For a detailed explanation of the CIRC you can read the ECMA 130 standard.

7. CIRC - Page 2

- How does CIRC work?

CIRC uses two distinct techniques in order to achieve this remarkable ability

to detect and correct erroneous bytes. The first is redundancy. This means that

extra data is added, which offers an extra chance for reading it. For instance,

if all data were recorded twice, you would have twice as good a chance of recovering

the correct data. The CIRC has a redundancy of about 25%; that is, about a quarter

of the recorded bytes are parity. This extra data is cleverly used to record information

about the original data, from which the missing information can

be deduced.

The other technique used during the implementation of CIRC is interleaving.

This means that the data is distributed over a relatively large physical area

of the disk. If the data were recorded sequentially, a small defect could easily

wipe out an entire word (byte). With CIRC, the bits are interleaved before recording,

and de-interleaved during playback. One data block (frame) of 24 data bytes

is distributed over 109 adjacent blocks. To destroy one byte, you would have

to destroy these other bytes. With scratches, dust, fingerprints, and even holes

in the disc, there is usually enough data left to reconstruct any data bytes

that have been damaged or caused the disk to become unreadable.

What happens is that the bits of individual words are mixed up and distributed

over many words. Now, to completely obliterate a single byte, you have to wipe

out a large area. Using this scheme, local defects destroy only small parts

of many words, and there is (hopefully) always enough left of each sample to

reconstruct it. To completely wipe out a data block would require a hole in

the disc of about 2 mm in diameter.

Moreover, the Reed-Solomon codes used for both levels of error correction are

specifically designed to handle "burst" errors, not just the

random errors that usually come in the form of so-called

Gaussian or white noise.

8. CD Decoding system

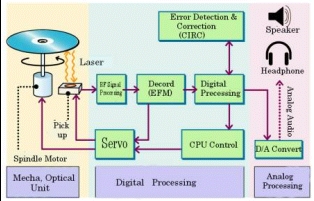

The following pictures show the CD decoding system in detail.

The RF signal is sent to the EFM decoder where the EFM channel bit stream is

decoded and passed to the first stage of the CIRC decoder. After the C1 decoder,

the data is de-interleaved and passed to the final stage of the CIRC.

If the C2 decoder can decode the data, the data is then de-interleaved again and

sent to the error concealment circuit, then through the D/A converter to the speakers. In the case of CD-ROMs,

the data is de-scrambled (an additional interleave stage) before being sent to the

error concealment circuit.

If the C2 decoder fails, it flags the errors for the error concealment circuitry

where the remaining errors are concealed before being passed to the digital

to analog converter and finally to your speakers. Only rarely (about once every

125 hours) is the CIRC decoder unable to identify and flag an error for

the concealment circuitry. When this happens, an audible click is often heard.

The digital audio stream is error-free unless the CIRC determines that a C2

codeword is contaminated by an error pattern that it cannot correct. If all

24 bytes are flagged as wrong, 6 samples are lost at once on

both channels. If all the audio data that lie adjacent to the audio samples

in the uncorrectable C2 codeword are reliably recovered from the disc, then

the values of the un-reliable audio samples are interpolated.

In case of Data discs, CD-ROM chipsets use the C2 error flag to mark data going

into ECC layer 3 so that they can use erasure correction for improved performance,

similar to the C1 flag being used to mark erasures for C2.

A more detailed explanation of the various strategies used in the C1/C2 decoders

follows (source: Digital-Inn):

"…Assuming there are no input flags from the EFM decoder (the normal

situation), C1 can detect and correct up to 2 symbols (bytes). However the exact

performance is determined by the strategy employed. The two basic strategies

are (a) correct 1 symbol or (b) correct two symbols.

In (a), the decoder will detect and correct a frame with one errored symbol.

It will accurately detect frames with 2 or 3 errored symbols. It will mostly

but not always detect frames with 4 or more errored symbols (see later). For

frames with one errored symbol the flags will all be set to definitely correct.

For frames with 2 or 3 errored symbols, there are two possible strategies; either

mark the detected errors as possibly incorrect or mark the whole frame as

possibly incorrect. The second possibility is usually employed mainly due to

accuracy; basically it would be as inaccurate as the 2 symbol scheme without

the increased correction capability. For frames with 4 or more errors, all symbols

in the frame will be flagged as possibly incorrect as there is no way of differentiating

the correct from the incorrect symbols.

In (b), the decoder will reliably detect and correct frames with one or two

errored symbols. Frames with 3 errored symbols will either be correctly detected

(generally resulting in the whole frame being marked as unreliable) or will

be misdetected as having 2 errors and will be incorrectly corrected resulting

in 5 unflagged errors being passed to C2. For 4 errors, there is a slight

possibility (2^-19) that C1 will misinterpret this as 1 error again resulting

in 5 undetected errors being passed to C2 (the same as the 1 correction strategy),

otherwise 4 or more errors will be detected and the whole frame will be marked

as unreliable.

C2 uses the original information and the flags to try to detect all errors

undetected or miscorrected by C1; here the strategies become more divergent.

Unfortunately the manufacturers are not giving this information away.

There are other strategies (including multiple pass ones) and it is also possible

to apply many strategies at once and then decide which to use based on the results…"

9. C1/C2 Errors - Page 1

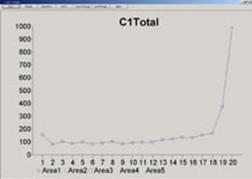

- Measuring C1/C2 errors

For measuring C1 and C2, industry has established a testing methodology. Those

two measurements can be placed in the "Data Channel" tests, according

to the CD standard measuring methods. Data Channel tests are concerned with

the integrity of the decoded data from the disc in terms of the amount of and

severity of errors on the disc. This is a good overall indication of disc quality,

however, when there are underlying problems causing high error rate the root

of the problem can be found by looking at other tests.

In CD terminology, errors are usually mentioned as Exy, where x denotes the

number of bytes containing an error and y denotes the decoder stage (1 or 2).

- C1 Decoder

At the lowest level, a CD-ROM drive reads EFM frames from the CD. An EFM frame

consists of 24 user data bytes, 1 Subcode and 8 P&Q parity bytes. When reading

data from a CD, the EFM data is de-modulated and the 24-bytes of user data are

passed through the CD drive's C1 and C2 decoders:

- C1 is a (32, 28) code producing four P parity symbols. P parity is designed

to correct single-symbol errors and detect and flag double and triple errors

for Q correction.

- C2 is a (28, 24) code; that is, the encoder takes in 24 symbols and outputs

28 symbols, including 4 symbols of Q parity. Q parity is designed to correct

one erroneous symbol, or up to four erasures in one word.

Using four P parity symbols, the C1 decoder corrects random errors and detects

bursts. The C1 decoder can correct up to four symbols if the error location is

known, and two symbols can be corrected if the location is not known. Three

error counts (E11, E21 and E31) are measured at the output of the C1 decoder.

- E11: the frequency of occurrence of single symbol (correctable) errors

per second in the C1 decoder.

- E21: the frequency of occurrence of double symbol (correctable) errors in

the C1 decoder.

- E31: the frequency of triple symbol, or more, (un-correctable) errors in

the C1 decoder. This block is uncorrectable at the C1 stage, and is passed

to the C2 stage.

C1 is defined as the sum of E11+E21+E31 per second within the inspection range.

The unit for this measurement is [number]. The block error rate (BLER) equals

the sum of E11 + E21 + E31 per second averaged over ten seconds.
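The C1 and BLER definitions can be sketched as follows, assuming a tester reports E11, E21 and E31 as one count per second. The function names and the use of a sliding ten-second window are illustrative assumptions.

```python
def c1_per_second(e11, e21, e31):
    """C1 for each second: the sum of the three C1-stage error counts."""
    return [a + b + c for a, b, c in zip(e11, e21, e31)]


def bler(e11, e21, e31, window=10):
    """BLER: the per-second C1 total averaged over a sliding ten-second window."""
    c1 = c1_per_second(e11, e21, e31)
    return [sum(c1[i:i + window]) / window for i in range(len(c1) - window + 1)]


# Twelve seconds of illustrative counts.
e11 = [10] * 12
e21 = [2] * 12
e31 = [1] * 12
print(bler(e11, e21, e31))
```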

- C2 Decoder

Given pre-corrected data, and help from de-interleave, C2 can correct burst

errors as well as random errors that C1 was unable to correct. When C2 cannot

accomplish correction, the 24 data symbols are flagged as an E32 error and passed

on for interpolation.

In the case of audio data, the E32 error is also referred to as a CU. When

CU errors occur on audio, the 24-bytes are passed on to a concealment circuit

on the CD drive. This circuit uses different methods to conceal the error so

that it does not cause audible effects such as a pop or a click. If too many

bytes are corrupt or if there are many CUs in a row, it is possible that even

the concealment circuit will not be able to conceal the error and a pop or click

may be heard during playback.

There are three error counts (E12, E22, and E32) at the C2 decoder.

- E12 count indicates the frequency of occurrence of a single symbol (correctable)

error in the C2 decoder. A high E12 is not problematic because one E31 error

can generate up to 28 E12 errors due to interleaving. Each C1 byte is sent

in a different C2 frame, therefore never affecting more than one byte in any

C2 frame.

- E22 count indicates the frequency of double symbol (correctable) error in

the C2 decoder. E22 errors are the worst correctable errors.

- E32 count indicates triple-symbol, or more, (un-correctable) errors in the

C2 decoder. E32 should never occur on a disc.

C2 is defined either as the total of

- E32 per second within the inspection range for some manufacturers

- E12+E22 per second within the inspection range for other manufacturers

The unit for this measurement is [number]. The E21 and E22 signals can be combined

to form a burst error (BST) count. This count is often tabulated as a

total number over an entire disc.

Based upon Exy errors, we can define new measurements like BEGL

(Burst Error Greater than Length). The Red Book specifies that the number of

successive C1 uncorrectable frames must be less than seven. Therefore, the threshold

for BEGL is set to 7 frames and any instance where the Burst Error Length is

7 or more frames, counts as a BEGL error. Good discs should not generate BEGL

errors, because error bursts of this length could produce E32 errors.
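A BEGL counter along the lines described above might be sketched like this, assuming the tester provides one flag per frame indicating whether the frame was uncorrectable at C1. The function name is hypothetical.

```python
def count_begl(c1_uncorrectable, threshold=7):
    """Count runs of consecutive C1-uncorrectable frames that reach the threshold."""
    count = run = 0
    for bad in c1_uncorrectable:
        if bad:
            run += 1
            if run == threshold:       # count each long burst once
                count += 1
        else:
            run = 0
    return count


# Bursts of 6, 7 and 9 frames: only the last two reach the 7-frame threshold.
flags = [True] * 6 + [False] + [True] * 7 + [False] + [True] * 9
print(count_begl(flags))
```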

- Comparison with DVD

In the DVD format, the Block Error Rate, Burst Error Length, and E-flags, E11-E32,

are replaced by simple counts of parity errors, PI and PO. The error detection

/ correction scheme used in the DVD system is still a Reed-Solomon code but

it is conceptually much simpler. There is only one error detection / correction

code, resulting in only one specification for inner parity errors: PI < 280 per 8

ECC blocks.

10. C1/C2 Errors - Page 2

- E32 errors

There are some drives that can correct even up to four bad symbols at the second

stage. However for the majority of the tests, we consider E32 an uncorrectable

error, even though some drives may be able to correct it. The quality of our

recorded media will decrease with regular use and aging. Discs that already show

E22 or E32 errors will not leave adequate margin for future degradation.

Uncorrectable errors may not be a problem on audio discs, since the player's

interpolation circuitry will hide these errors. Some players can interpolate

over up to eight consecutive bad samples.

Uncorrectable errors do not necessarily make a CD-ROM unusable either. Errors

that are uncorrectable in the main CIRC correction stage may still be corrected

by the EDC/ECC sector level error correction used on CD-ROMs. Therefore, the

data may still be recoverable, and can still verify if you are comparing to

the original. Of course a disc like this has no tolerance for additional degradation,

such as scratches and fingerprints, so access time will increase and it will

soon fail.

- Testing Speeds

Some error rates may be higher at faster speeds, and others will be higher

at lower speeds. In general, error rates on good discs will be about the same

at higher speeds as at 1X. Small errors such as E11 & E21 will not be affected

much. Burst errors, on the other hand, will be greatly affected. Most burst

errors (E22 & E32) are caused by disturbances to the servo systems, rather

than missing data. This effect is greatly magnified at high speed.

Error on a disc is not a physical thing. It is a manifestation of how well

the total system (disc + player) is working. The disc itself does not have an

error rate; playing the disc produces errors. Ideally, what you want is a disc

that will play back on ALL players with a low error rate. Unfortunately, there

are no standards for players, only for the discs. Therefore, each type of player

will give different results.

Most testing equipment manufacturers believe that "…1X testing: This

is still the best way to isolate the disc characteristics from the player influences.

When we test discs at 1X, we can judge the disc, rather than the player…"

- Limits of Errors

The Data Channel Error rate must be as low as possible.

The "Red Book" specification (IEC 908) calls for a maximum BLER of

220 per second averaged over ten seconds. The CD block rate is 7350 blocks per

second; hence a BLER of 220 per second means that about 3% of frames contain a

defect.
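The 3% figure follows directly from the two numbers quoted above, as this short check shows (the constant and function names are illustrative):

```python
BLOCKS_PER_SECOND = 7350
RED_BOOK_MAX_BLER = 220

fraction_defective = RED_BOOK_MAX_BLER / BLOCKS_PER_SECOND
print(f"{fraction_defective:.2%} of frames may contain a defect at the limit")


def within_red_book(bler_value):
    """True if a ten-second average BLER does not exceed the quoted maximum."""
    return bler_value <= RED_BOOK_MAX_BLER
```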

Discs with higher BLER are likely to produce uncorrectable errors. Nowadays,

the best discs have average BLER below 10. A low BLER shows that the system

as a whole is performing well, and the pit geometry is good. BLER only tells

you how many errors were generated per second; it doesn't tell you anything

about the severity of those errors. Therefore, it is important to look at all

the different types of errors generated. Just because a disc has a low BLER,

doesn't mean the disc is good.

For instance, it is quite possible for a disc to have a low BLER, but have

many uncorrectable errors due to local defects. The smaller errors that are

correctable in the C1 decoder are considered random errors. Larger errors like

E22 and E32 are considered burst errors and are generally caused by local defects.

As you might imagine, the sequence E11, E21, E31, E12, E22, E32 represents errors

of increasing severity.

A Burst Error is defined as seven consecutive blocks in which the C1 decoding

stage has detected an error. This usually indicates a larger scale defect on

the disc, such as a scratch. In general, new discs which have not been handled

on the read surface should not exhibit any burst errors. A Burst Error constitutes

a disc failure. That is why much testing equipment offers "Digital Error

Mapping" for quick viewing of C1, C2 and CU errors.

11. EFM - Page 1

The data stream must undergo CIRC error correction and EFM modulation to reduce

the possibilities of creating an error.

- What is EFM?

EFM (Eight to Fourteen Modulation) is a method of encoding

source data for CD formats into a form that is easy to master, replicate and

play back reliably. EFM modulation gives the bit stream specific patterns of

1s and 0s, thus defining the lengths of pits and lands. EFM permits a high number

of channel bit transitions for arbitrary pit and land lengths. The merging bits

ensure that pit & land lengths are not less than 3 and no more than 11 channel

bits. This reduces the effect of jitter and distortions on the error rate, increases

data density and helps facilitate control of the spindle motor speed.

- How is EFM performed?

Blocks of 8 data bits are translated into blocks of 14 channel

bits. The 8-bit symbols require 2^8=256 unique patterns, and of the possible

2^14=16,384 patterns in the 14-bit system, 267 meet the pattern requirements;

therefore, 256 are used and 11 discarded.
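The count of 267 usable patterns can be reproduced by brute force. The sketch assumes the pattern requirements are: at least two 0s between any two 1s, and no run of more than ten 0s anywhere in the 14-bit word. The function name is illustrative.

```python
def satisfies_run_lengths(bits: str) -> bool:
    """Check the assumed EFM constraints on a string of '0' and '1' characters."""
    runs = bits.split("1")             # runs of 0s, including leading and trailing
    between_ones = runs[1:-1]          # only these must contain at least two 0s
    return (all(len(run) >= 2 for run in between_ones)
            and all(len(run) <= 10 for run in runs))


patterns = [format(word, "014b") for word in range(2 ** 14)]
valid = [p for p in patterns if satisfies_run_lengths(p)]

print(len(valid), "patterns meet the requirements")
print(len(valid) - 256, "are left unused")
```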

Blocks of 14 channel bits are linked by the three merging bits

to maintain the proper run length between words, as well as suppress dc content,

and aid clock synchronization. The digital sum value (DSV) is used to monitor

the accumulating dc offset. The ratio of bits before and after modulation is

8:17.

The resulting channel stream contains runs of at least two but no more than

ten successive 0s, so pits and lands are 3T, 4T … 11T long, where

T is one channel bit period. The pit / land lengths vary from 0.833 to 3.054

µm at a track velocity of 1.2 m/s, and from 0.972 to 3.56 µm at

a track velocity of 1.4 m/s.
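These lengths follow directly from the channel bit period and the track velocity. The short sketch below reproduces them, assuming the standard 1X channel bit rate of 4.3218 MHz (a bit period of about 231 ns). The constant and function names are ours.

```python
CHANNEL_BIT_RATE_HZ = 4_321_800          # CD channel bit rate at 1X


def run_length_um(n_t: int, velocity_m_s: float) -> float:
    """Physical length in micrometres of an nT pit or land."""
    bit_period_s = 1.0 / CHANNEL_BIT_RATE_HZ
    return n_t * bit_period_s * velocity_m_s * 1e6


for velocity in (1.2, 1.4):
    shortest = run_length_um(3, velocity)
    longest = run_length_um(11, velocity)
    print(f"{velocity} m/s: 3T = {shortest:.3f} um, 11T = {longest:.3f} um")
```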

- Why use a 14-bit system?

Using 14-bit symbols allows up to 2^14, or 16,384, combinations. This provides bit patterns that have a low number of transitions between 0 and 1. Using 8-bit symbols directly would require too many pits because of the large number of transitions. The 14-bit symbols used in EFM are taken from lookup tables, which are defined by the Red Book specifications. However, only patterns in which at least two but no more than ten 0s appear in a row are used. Although the data becomes 17/8 times larger, each pit or land now spans at least 3 channel bits. In the end, more data can be recorded on the CD even though 17 bits are used instead of 8.
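The trade-off can be put into numbers. The sketch below is a simplified comparison and assumes that, without EFM, the smallest feature on the disc would have to carry exactly one data bit. The variable names are ours.

```python
DATA_BITS = 8                            # bits per source symbol
CHANNEL_BITS = 14 + 3                    # 14-bit word plus 3 merging bits
MIN_FEATURE_CHANNEL_BITS = 3             # shortest pit or land is 3T

# Data bits carried per minimum-size feature, without and with EFM.
bits_per_feature_raw = 1.0               # assumption: one bit per smallest feature
bits_per_feature_efm = MIN_FEATURE_CHANNEL_BITS * DATA_BITS / CHANNEL_BITS

gain = bits_per_feature_efm / bits_per_feature_raw
print(f"data bits per smallest feature with EFM: {bits_per_feature_efm:.2f}")
print(f"density gain over unencoded data: {gain:.2f}x")
```

Under this assumption the gain is 24/17, or roughly 1.4 times, for the same smallest pit size.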

Below are some examples of 8-bit data symbols and their 14-bit equivalent.

Once the 14-bit symbols are put together, it is possible that the bits between

the two symbols will create an illegal bit pattern. For example, joining the

last and first bit patterns in the examples above would create an illegal bit

pattern since a minimum of two 0s must be recorded continuously.

In such cases, Merge Bits must be used between the two 14-bit symbols in order

to satisfy the specifications. The following picture shows a bit pattern and

the pits that it would produce. The areas between the pits are called "lands".

If the source binary data were recorded without encoding in this way, the disc would frequently need to represent a single '1' or '0', requiring mastering and replication to reproduce very small artifacts on the disc. EFM encoding ensures that the smallest artifact on the disc is three units long and the average artifact is seven units long.

Within the EFM lookup table, it is possible for a 14-bit code to start or end with a '1'. To prevent a situation in which one 14-bit code ends with a '1' and the next one starts with a '1', three merging bits (all '0' in this case) are added between each pair of 14-bit codes. So in reality, EFM is eight-to-seventeen modulation.
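The choice of merging bits can be sketched as a simple filter: try each candidate and keep those for which the joined stream still obeys the run-length rule. Since two 1s must be separated by at least two 0s, a three-bit merge pattern can contain at most one 1, which leaves four candidates. The two sample words below are made up for illustration and are not claimed to be entries from the Red Book table. The function names are ours.

```python
MERGE_CANDIDATES = ("000", "100", "010", "001")


def obeys_run_lengths(bits: str, min_zeros: int = 2, max_zeros: int = 10) -> bool:
    """Check that 0-runs between 1s are >= min_zeros and no run exceeds max_zeros."""
    zero_runs = bits.split("1")
    inner_runs = zero_runs[1:-1]         # runs bounded by a 1 on both sides
    return (all(len(run) >= min_zeros for run in inner_runs)
            and all(len(run) <= max_zeros for run in zero_runs))


def valid_merge_bits(prev_word: str, next_word: str) -> list:
    """Return the merge patterns that keep prev + merge + next legal."""
    return [merge for merge in MERGE_CANDIDATES
            if obeys_run_lengths(prev_word + merge + next_word)]


# Hypothetical 14-bit words, chosen only to show the two typical cases.
ends_with_one = "00100100000001"
starts_with_one = "10000100000000"
starts_with_zeros = "00000100010000"

print(valid_merge_bits(ends_with_one, starts_with_one))      # ['000']
print(valid_merge_bits(starts_with_one, starts_with_zeros))  # ['100', '010', '001']
```

In the second case "000" is rejected because it would create a run of 16 zeros. When several candidates are legal, an encoder is free to pick the one that best suppresses the dc content.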

12. EFM - Page 2

- RF Signal

The RF signal from the disc contains all the data and is also used to maintain the proper CLV rotation velocity of the disc. A repeating 3T pattern produces a 720 kHz signal, and a repeating 11T pattern produces a 196 kHz signal at 1.2 m/s. T is the period of one channel bit, which is about 231 ns. A collection of EFM waveforms is called the "eye pattern". Whenever a player is tracking data, the quality of the signal can be observed from the pattern.
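The two frequencies can be reproduced from the channel bit rate: a pit of length nT followed by a land of length nT forms one full cycle of 2nT. The sketch assumes the 1X channel bit rate of 4.3218 MHz. The names are ours.

```python
CHANNEL_BIT_RATE_HZ = 4_321_800          # CD channel bit rate at 1X


def run_frequency_khz(n_t: int) -> float:
    """Frequency of a repeating nT pit plus nT land (one cycle = 2nT)."""
    return CHANNEL_BIT_RATE_HZ / (2 * n_t) / 1e3


bit_period_ns = 1e9 / CHANNEL_BIT_RATE_HZ
print(f"T   = {bit_period_ns:.1f} ns")
print(f"3T  = {run_frequency_khz(3):.0f} kHz")
print(f"11T = {run_frequency_khz(11):.0f} kHz")
```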

The picture below shows the various stages from the original PCM signal up to the eye pattern. The modulated EFM signal is read from the disc as an RF signal. The RF signal can be monitored through an eye pattern by simultaneously displaying successive waveform transitions.

- Demodulation

During demodulation, the "eye pattern" from the disc is converted to NRZI (Non Return to Zero Inverted) data, then to NRZ, then to EFM data and finally to the audio data. Following demodulation, the data is sent to a Cross-Interleave Reed-Solomon code circuit for error detection and correction.

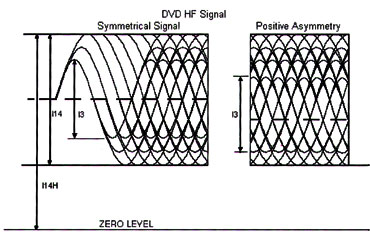

Eye Pattern

The eight-to-fourteen (EFM) modulation scheme used produces just nine different

possible lengths of pits and lands. Therefore, the resulting HF consists of

square waves of nine different durations. The signal appears sinusoidal on the

'scope because of the limited frequency response of the optics. It is common

to display the photo detector output on a scope with a conventional trigger.

This results in a display where the nine possible frequencies (3T to 11T)

all add up on top of each other. This type of display is termed an "eye

pattern" and provides valuable information about the various alignment

parameters of the CD player. Notice that the relationship between size and wavelength

is very distinct in the eye pattern.

The RF output is converted to a square wave, which then phase-locks a clock with the period T. Each of the nine pit/land lengths is an exact multiple of one fundamental length, called 1T.

The T represents the data clock period, which is 231 nanoseconds at 1X read

speed. The particular run length is referenced by nT where n is an integer multiple

of the clock period. The nine possible lengths of pits and lands are 3T, 4T,

5T, 6T, 7T, 8T, 9T, 10T, and 11T. The waveforms generated by these pits &

lands are called I3, I4, I5, I6, I7, I8, I9, I10 and I11 respectively. I3 represents

the shortest pit / land, and I11 represents the longest pit or land.

13. Jitter - Page 1

- Introduction

Along the 5 km of track on a typical CD, there are bright and dark marks ranging in length from about 0.8 µm to 3.1 µm. Without any defects or noise, these sequences of bright and dark marks contain all the information necessary to play back the original recording.

To read these sequences, a laser is focused on to the track and a photo detector

(PD) measures the amount of light reflected back from the track. The RF signal

from the PD represents the reflectivity of a small region of track. An example

of the marks in the track and the RF signal are shown in picture below. To determine

the pattern of marks on the discs, a clock is generated off the data and a threshold

is set for the RF signal.

If the RF signal crosses the threshold, a "1" is entered for that time bin; otherwise a zero is entered. This binary bit stream represents the "EFM" code, the first of the three primary codes used in the CD system (EFM, C1, and C2). If the RF signal contains noise, the threshold crossing can come too early or too late. The average amount of time by which these threshold crossings move around with respect to the time bin is called "Jitter".

If the "Jitter" is large enough, the threshold crossings will be assigned to incorrect time bins and introduce errors into the EFM bit stream. While the next two codes in the CD system protect against these types of errors, it is possible for jitter to appear at your speakers as distorted audio if these errors go uncorrected.

- History Of Jitter

Jitter first appeared as a required measurement for optical discs in the Orange

Book. Subsequently it was added to the Red Book as an amendment. The Red Book stipulated that

the measurements specified must be performed on a single beam drive. This relates

to the method of tracking and was the early standard for consumer players. Early

tester equipment was based on the Philips CDM1 or CDM4 mechanisms, both single

beam. However, by the time the jitter amendment to the Red Book was ready, most

drives were triple beam.

Research revealed that there was low correlation between single and triple

beam measurements of jitter. The standard for jitter measurement became another

Philips drive, the CDM12, which was triple beam.

Data to data jitter had a drive dependency due to the method of measuring pit

and land lengths in time. A drive contributed variations from its electronics

and optics. This was one of the main reasons that drive selection was important

to CD test equipment manufacturers: they had to try to constrain the range of

variation by selecting the drives they used. When a consumer drive looks at

a disc its job is to get the best out of that disc regardless of what the disc

is like. Hence optimising focus, tracking or slicing is all part of the strategy.

14. Jitter - Page 2

- What causes Jitter?

There are three possible causes of Jitter:

a) The recorded pits are not perfectly accurate in size. This is caused by laser noise and the recording strategy of the recorder. Even if the pits were perfectly recorded and replicated, there would still be jitter because of the limited resolution of the pickup in the player.

b) There is the influence of other pits nearby in the same track. The readout

spot is broad enough that when the centre of the spot reaches the beginning

of a short pit, the end of the pit lies within the fringes of the spot. So the

apparent position of the one pit end is slightly dependent on where the other

end is. The same applies to short lands. This is called inter-symbol interference.

The jitter which arises from this is not truly random, but is associated with

the pattern of recorded pit and land lengths.

c) There is crosstalk between pits in adjacent tracks, because the readout

spot does not fall wholly on one track. It is a largely random contribution.

It is worse at lower recorded velocities, because the highest frequency components

of the readout signal in the wanted track, with which the crosstalk is competing,

are weaker.

- Why Measure Jitter?

Jitter is important because the CD information is carried in the edges of the

pits. A transition between pit and land (either pit to land or land to pit)

is a one, and everything else is a zero. Since the data is self-clocked at a

constant rate, if the edges of the pits are not in the correct places, errors

will be generated.
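The rule "a transition is a one, everything else is a zero" can be sketched in a few lines. The example assumes the track has already been sampled once per channel clock period into 'P' (pit) and 'L' (land). The function name and the sample string are ours.

```python
def levels_to_channel_bits(levels: str) -> str:
    """Map per-clock pit/land samples ('P' or 'L') to channel bits.

    A bit is 1 where the level differs from the previous clock period,
    otherwise 0. The first sample has no predecessor, so it is emitted as 0.
    """
    if not levels:
        return ""
    bits = ["0"]
    for previous, current in zip(levels, levels[1:]):
        bits.append("1" if current != previous else "0")
    return "".join(bits)


# Three clocks of land, a 4T pit, then three clocks of land.
print(levels_to_channel_bits("LLLPPPPLLL"))   # 0001000100
```

The distance between the two 1s is four clock periods, which is the length of the pit. If an edge is sampled one clock too early or too late, a 1 lands in the wrong position and the run length is misread.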

If a transition between pit and land comes as little as 115 ns early or late, an error will result. A standard deviation of less than 35 ns will result in only about 1% probability of the effect lengths varying by more than 115 ns. In addition, any type of disc defect will affect the jitter, making this a sensitive test of disc quality. Pit distortion, crosstalk from adjacent tracks, intersymbol interference, pit wall steepness, low signal-to-noise ratio, and LBR (or CD-R writer) instability can all cause jitter on the disc.
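The relation between the 35 ns standard deviation and the 115 ns window (roughly half a channel bit period) can be explored with a simple model. The sketch below assumes that the timing error is zero-mean and Gaussian, which is our simplification and not something the specifications state. The function name is ours.

```python
import math


def probability_outside_window(sigma_ns: float, window_ns: float) -> float:
    """Two-sided probability that a zero-mean Gaussian error exceeds +/-window."""
    return math.erfc(window_ns / (sigma_ns * math.sqrt(2.0)))


p_35 = probability_outside_window(sigma_ns=35.0, window_ns=115.0)
p_50 = probability_outside_window(sigma_ns=50.0, window_ns=115.0)
print(f"sigma = 35 ns: {p_35:.3%}")
print(f"sigma = 50 ns: {p_50:.3%}")
```

Under this idealized model the tail at 35 ns comes out nearer 0.1% than the roughly 1% quoted above, so the quoted figure presumably rests on different assumptions. The numbers are best read as an illustration of how quickly the error probability grows once the standard deviation rises.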

15. Jitter - Page 3

- Measuring Jitter

Pit geometry and Jitter can be measured by looking at the HF signal coming

from the pickup. The HF (High Frequency) signal coming from the pickup represents

the light intensity of the beam reflected back from the disc surface. A higher

voltage represents greater light intensity, and a lower voltage represents less

light. The signal is rapidly changing between light and dark as the beam passes

over the pits. When the beam is over a pit, the light intensity is reduced.

When the beam is between pits (over "land"), the light intensity is

higher. Data is encoded in the transitions between pits and lands.

The waveform shown below shows "Jitter" as regenerated from an optical disc. "Jitter" values and limits are specified in the published CD-ROM and DVD industry standards. Jitter is characterized mainly by two measurements: the time difference between the data and the clock, and the pulse width (pulse cycle measurement for magneto-optical drives). The pulse width measurement is used to evaluate disc media.

Because the oscilloscope is triggered by the signal itself, we can see the

jitter in the different pit lengths (such as 3T, 4T…11T) separately. The

other thing we can see is the "deviation" for each pit length. This

is a measure of how different a given pit length appears to be from what it

should be. So, for example, the usual tendency is for the shortest pits (the

3T pits) to appear a little shorter than 3T. Jitter and deviation are two aspects

of the same thing: jitter is the random variation, and deviation is the average

error, in the apparent length.
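The distinction between jitter and deviation can be made concrete with a few lines of statistics. The sketch assumes a 1X channel bit period of about 231.4 ns. The measurement values are invented for illustration, and the function name is ours.

```python
import statistics

CHANNEL_BIT_PERIOD_NS = 1e9 / 4_321_800  # about 231.4 ns at 1X


def jitter_and_deviation(n_t: int, measured_ns: list) -> tuple:
    """Return (jitter, deviation) in ns for one run length nT.

    jitter    = standard deviation of the measured lengths (random spread)
    deviation = mean measured length minus the nominal n*T (average error)
    """
    nominal_ns = n_t * CHANNEL_BIT_PERIOD_NS
    jitter = statistics.stdev(measured_ns)
    deviation = statistics.mean(measured_ns) - nominal_ns
    return jitter, deviation


# Made-up 3T pit measurements, for illustration only.
samples_3t_ns = [680.0, 690.0, 700.0]
jitter_ns, deviation_ns = jitter_and_deviation(3, samples_3t_ns)
print(f"3T jitter = {jitter_ns:.1f} ns, deviation = {deviation_ns:.1f} ns")
```

Here the negative deviation matches the tendency mentioned above for 3T pits to appear slightly shorter than 3T. A real analyzer would use thousands of samples per run length, not three.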

16. Oscilloscope

Generally, HF measurements are easier to make on an analog oscilloscope. This

is because an analog 'scope shows a multitude of sweeps superimposed, whereas

a digital 'scope shows only a "snapshot" of one brief sample. The

"Eye Pattern" is what you see on an analog scope. You cannot see this

eye pattern on a digital scope. Instead, you see just a sampling of a few pits

and lands. A digital 'scope is good for close inspection of individual pits,

but what we are interested in is the average values over many pits.

Jitter is a statistical measurement of the variation in pit or land length

around the mean value for each run length. For each pit and land length a large

number of lengths are measured and the standard deviation is reported.

If the standard deviation is high (greater than 35ns) this can cause instability

in the clock and data decoding circuitry on target players and hence reduce

the readability of the disc. Once again this parameter is more important when

considering CD-ROM discs as these discs are likely to be read in high-speed

players.

17. Jitter at DVD

For the CD format, Philips maintains the industry's Reference Standard measurement system, "RODAN," or "Read-Only Disc Analyzer." RODAN is kept in Paris. In the DVD industry, there has been no appointment of a "DVD Paris Meter." Instead, a collection, or sub-group, of fundamentally standard Pulstec DDU-1000 analyzers has been used to produce the multi-point calibration disc sets, whose values are based on the sub-group averages for the key parameters. Both approaches, if executed properly, can be effective. Most testing equipment vendors use the Pulstec SDP-1000 drive for DVD jitter measurements.

The DVD specification includes a standard for jitter, which is not found in

the CD specification. The logical format specifies disc mechanical and optical

characteristics, but specifications for sources of degradation, like inter-symbol

interference introduced in the disc cutting step, are not included in the current

CD specification.

There are also items which cannot be expressed by parameters listed in the

current CD specification, such as degradation introduced by the mastering machine

or unevenness in pit replication. These effects can't be ignored in DVDs with

their higher recording density, and thus it is necessary to specify such factors.

There was an error rate specification in the CD specification, but this is impossible

to measure unless there are defects or degradation due to the playback device.

The disc specification for Pioneer's Karaoke System specified an error rate

using a tilted pickup, but this was a difficult measurement to make, and certainly

not efficient. In the DVD specification it was decided to add a jitter specification,

as jitter is a parameter where degradation can be measured numerically. Jitter

is measured in the absence of tilt, which is an ideal disc specification, but

it isn't practical to compensate for tilt at all points. Since the effect on

jitter of the varying component of surface tilt is small, it was decided

to measure across one full revolution and use the average value of the tilt

variation. As a result, it is only necessary to compensate for the average radial

tilt when taking measurements.

18. Technologies for Reducing Jitter

Because the effects of jitter can make it to your speakers, there is a growing number of jitter reduction technologies for the creation of recordable CD audio discs, including Yamaha's "Audio Master Quality Recording" and Plextor's "VariRec" for CD recordable discs, as well as JVC's "K2 Laser Beam Recorder" for mastering CD-ROMs.

Yamaha's Audio Master Quality Recording technology increases the window margin by lengthening the EFM marks along the track. While the actual jitter of the RF threshold crossings, measured in nanoseconds, does not change, lengthening the EFM marks lowers the relative jitter, measured as a percentage of the channel clock period. The reduction of relative jitter by this technique results in a lower-capacity disc (63 min vs. 74 min), but reduces the need to activate the error concealment circuitry and ultimately results in less audio distortion.
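The capacity figures are consistent with simple scaling. The sketch below assumes that the 74-minute capacity corresponds to a track velocity of 1.2 m/s, that the lengthened marks correspond to 1.4 m/s, and that the usable track length stays the same. The function name is ours.

```python
def playing_time_minutes(base_minutes: float, base_velocity: float,
                         new_velocity: float) -> float:
    """Playing time when the same track length is written at a new velocity."""
    return base_minutes * base_velocity / new_velocity


print(round(playing_time_minutes(74.0, 1.2, 1.4), 1))   # 63.4
```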

Similar EFM mark lengthening and the associated relative jitter reduction can be obtained using a disc such as Kodak's 63-minute Photo-CD (perhaps without also giving up recording speed, multiple sessions and "SafeBurn"). These 63-minute discs are made by increasing the physical length of one cycle of the wobble (the wobbled track provides the reference for spindle speed control). This lower relative wobble frequency results in the drive spinning the disc faster, which makes the marks longer.

19. JVC ENC K2

The following information is taken directly from JVC's website. Since this technology is used only in pressed discs, it is presented as originally posted. For more information visit JVC's Japanese website.

Victor Company of Japan, Limited (JVC) and Victor Entertainment, Inc. announced on 6 November 2002 the co-development of "Encode K2" (ENC K2) technology, which according to JVC brings dramatic improvements in sound quality to formats such as CD and DVD Audio during the manufacturing process. The development of ENC K2 has been made possible by the application of JVC's proprietary "K2 Technology" during the CD format encoding process.

JVC will employ the technology on CCCDs to be released on November 13, 2002,

and display the logo marks on the left as a guarantee of a musical presence

virtually identical to that of the original master tapes (http://www.jvcmusic.co.jp/cccd).

In the quest to convey "the truth of the music" accurately, the two

companies have worked together for years to develop technologies that improve

the sound quality of disc media, aiming to achieve faithful reproduction on

CD of the musical quality of original master tapes.

Based on conventional K2 Technology, ENC K2 reproduces a musical presence virtually

identical to that achieved on the original master tapes. ENC K2 technology eliminates

any artifacts that might alter or degrade sound quality from the digital signal

transmission and occur during the CD format encoding process, such as distortion

and noise.

- Background information

Theoretically, the quality of digital audio should not change as long as codes

remain constant. In fact, however, it is modified during digital signal transmission.

The CD cutting process used when manufacturing CDs usually includes three processes:

"Reproduction of Master Tapes," "CD Format Encoding Process,"

and "Laser Cutting Process." Most recently, PC software and copy control

functions have been introduced as a part of the "CD format encoding"

process.

ENC K2 was developed to eliminate the artifacts that alter sound quality from

digital signal transmission from input to output during the "CD format

encoding" process; a process which is becoming increasingly multi-functional.

(See Chart 1 on the next pages.)

The two companies have jointly developed and introduced technologies to improve

music quality based on their proprietary K2 technology, including "Digital

K2" which eradicates alterations in the audio quality of master tapes and

"K2 Laser Cutting," (K2 LC) which improves the precision and purity

of laser cutting during the CD cutting process.

With the development of ENC K2, the companies have established the Full Code

Transfer System at all processes during the cutting process in CD manufacturing.

(See Chart 2 on the next pages.)

- "K2 Technology" and "ENC K2"

Theoretically, the quality of digital audio should not change as long as codes

remain constant. In fact, however, it is modified during digital signal transmission.

This occurs due to non-code components, such as "ripple" and "jitter,"

which exist in digital signals. "K2 Interface," the first version

of the two companies' proprietary K2 technology, was developed in 1987. This

technology instantly reads only "1" and "0," logical codes

from all digital signals including those that change the music quality, then

recreates new digital signals. With this technology, the companies succeeded

in eliminating any quality modifying factors from digital signal transmission.

JVC has successfully inserted "ENC K2" in the CD format encoding process

by adapting "K2 Technology" to EFM and controlling data synchronization.

The companies realized the "Master Direct" concept, in which every

CD faithfully reproduces the music quality of master tapes, by establishing

the "Full Code Transfer System." This system removes the factors that

alter music quality during the three most important CD cutting processes: "Reproduction

of Master Tapes," "CD Format Encoding Process," and "Laser

Cutting Process."

20. AudioMASTER

The theory behind this entire "new" recording mode is relatively simple. According to the original Red Book standard, the linear velocity of the laser beam over the CD's surface at 1x reading speed is allowed to vary between 1.2 and 1.4 meters per second. Anything between these extreme values is acceptable, and all CD players, even the most archaic ones, are expected to cope with a disc recorded at such a speed. This speed might even be non-constant for a single disc, as long as its variation stays within the bounds prescribed by the standard.

As some of the older members of this audience might remember, the first recordable discs were of a lower capacity, in the area of 63 minutes. Keep this in mind as we move on to the technical explanation below.

Consequently, when recording takes place at a higher linear speed, the length

of the pits is proportionally greater. In particular, at 1.4m/s, this length

is about 15% greater than the length of the same pit at 1.2m/s.

In real life, the pit length depends on several factors and matches the theoretical figure only within a certain uncertainty (error). During disc playback, this type of error is reported as "jitter". In "digital" terms, these errors are reported as C1/C2 errors.

Using a photo detector on the analog signal that passes through the laser diode

on the pickup mechanism of a drive, increased jitter is seen as more "blurring"

in the following pictures taken from a Yamaha white paper:

| Audio Master Quality Recording | Conventional Recording |
|---|---|
| (image not available) | (image not available) |

Laboratory experiments have found that this uncertainty is largely independent of the pit length. So a 15% longer pit carries about 15% less relative pit-length uncertainty! This is the crucial point of the whole discussion: a 15% increase in recording/reading speed results in about 15% fewer errors in the pit length.
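The arithmetic behind this argument is short enough to check. The sketch assumes that the absolute pit-length uncertainty stays fixed while the pit length scales with the linear velocity, going from 1.2 m/s to 1.4 m/s. The function name is ours.

```python
def relative_uncertainty_change(old_velocity: float, new_velocity: float) -> float:
    """Fractional change in (uncertainty / pit length) when only length scales."""
    length_ratio = new_velocity / old_velocity   # pits get longer by this factor
    return 1.0 / length_ratio - 1.0              # negative means a reduction


length_increase = 1.4 / 1.2 - 1.0
change = relative_uncertainty_change(1.2, 1.4)
print(f"pit length: +{length_increase:.1%}")
print(f"relative uncertainty: {change:.1%}")
```

Across the full 1.2 to 1.4 m/s range the pits are about 17% longer and the relative uncertainty about 14% lower, so "about 15%" is a fair round figure for both.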

A somewhat simplistic argument might suggest an analogous decrease in jitter. In fact, the Yamaha marketing department seems to take into account the uncertainty on both sides of a pit and claims (erroneously, in our opinion) a 30% jitter decrease when recording at a 15% higher linear speed. Overall, this new Yamaha "discovery" seems to make use of an old recording mode that was presumably in use several years ago, during the initial market introduction of commercial recorders.

21. VariREC

VariRec comes from the words "Variable Recording" and allows the laser power to be changed when writing CD-DA or CD-R at 4X (TAO or DAO). Users are allowed to make slight adjustments to the default value (0). This will change the quality of the sound and can also increase the playability or compatibility (or, in extreme cases, incompatibility) with existing players. Plextor says that the default setting (0) already reflects the optimized laser power with the lowest jitter.

VariRec can change the value of the laser power. The resulting effects are:

- change of sound quality of recorded disc (there should be a slight change,

some kind of effect when VariRec is used on the different settings)

- change of playability or compatibility with Home CD Players or Car CD Players

(some players need a slightly higher or lower laser power)

Most audio professionals have a personal preference for higher/lower laser

power (some even say they can hear the difference between a recording at high

speed and at 1X). When VariRec is used to the most extreme settings, there is

a chance that the playback device cannot read the disc properly. In this case,

use the default setting or switch VariRec off.

Users may compare Plextor's "VariRec" with Yamaha's "AudioMASTER" system. We asked Plextor the same question and the answer was that "...VariRec is not an answer to any technology of any other manufacturer. The write quality of Plextor recorders is already much better, several tests have proven this. However, the idea that VariRec is more an option to 'tune according to personal taste'..." This doesn't seem to unveil the whole truth. Both "AudioMASTER" and "VariRec" use 4x recording speed, and both technologies promise a reduction of jitter and better AudioCDs.

A good question here is whether we can actually hear such slight changes in the sound, since the digital audio signal passes through many DA/AD stages and circuits before analogue playback. Some people claim that they can hear such changes, probably with high-end systems… The addition of such specialized technologies for AudioCD recording is reasonable for users who may need them. I think most of you have forgotten how many minutes it takes to write a full CD at 4x ;-)

22. TEAC Boost Function

TEAC offers a similar technology to VariRec that "boosts" the laser

power in order to reduce Jitter. As TEAC explains "…The write state

will be changed by varying the laser power and its radiation duration of the

drive. As a result, the sound quality or read performance may fluctuate depending

on the congeniality of the media and the drive. The change may work better or

worse. It is impossible for TEAC to make verification as it depends on the congeniality

of the media and the drive. Changing the settings is your own responsibility…"

The CD-W540E tuning tool offers that option under the "CD-R(4X) Strategy" function. These settings work only for CD-R media and only when the write speed is set to 4X in the CD-R software. The two 4X writing modes are explained below:

- Normal: Default settings adjusted by TEAC

- Boost: The media is written with stronger laser emission in a lesser time

than those of the normal settings.

* Normal is selected in the default settings.

* Note in advance that some effects may not be noticeable or a side effect such

as increasing errors may result when Boost is selected.

23. Testing Equipment - Page 1

The official testing equipment on sale can be divided into two major categories.

Normal modified drives

The low-end testing equipment uses either a CD-ROM or a CD-R/RW drive. The most widely used CD-ROM drive comes from Plextor (PX-40TS). This kind of equipment can only measure recorded CDs, while testing equipment based on CD-RW drives can also measure blank CDs. Some manufacturers use Plextor's PX-W1210S or TEAC's CD-W512S CD-RW drives. The modification is not exactly known (it is kept secret for obvious reasons); probably an extra circuit is added to intercept signals from the optical drive (E11, E21, E31, E12, E22, and E32).

Each manufacturer has developed software that calculates the average/maximum

of BLER, C1, C2, Jitter and other measurements and draws graphs of each measurement:

High-end drives

High-end solutions are custom-built drives with special components. This ensures maximum readability but also maximum price. Most high-end drives are based on Philips (and Pulstec) optical pickups. There are three types of Philips optical pickups:

a) CDM24

b) CDM4, single-beam, linear polarized

c) CDM12 three-beam pickup

For the DVD format, manufacturers seem to select either normal modified DVD±R/RW drives or drives based on Pulstec pickups. Currently there are drives based on the Pioneer DVR-201 (Authoring, 635 nm), the Pioneer DVR-A03 (General, 650 nm) and the RICOH DVD+RW5125A (for the DVD+R/+RW format).

Especially for jitter measurements, the optical response of the pickup must be up to a uniform standard. The Red Book does specify the optical characteristics (wavelength, numerical aperture, illumination of the aperture, polarisation, and wavefront quality) of the pickup for jitter measuring purposes (although the polarisation specified is different from the one used for other Red Book measurements!), but it does not say anything about ordinary players.

Measuring systems do in practice use the same types of pickup as domestic players,

but some care must be taken in choosing them, or the results could be pessimistic.

Also it must be remembered that when we test discs against the given specification,

we are really supposed to be testing the jitter attributable to the disc alone,

after eliminating any contributions due to imperfections of the players.

24. Testing Equipment - Page 2

- Categories

Testing equipment can be found for:

- CD (pressed media)

- CD-R/RW media

- CD Stamper media

- Disc Balance Checker

- DVD-ROM media

- DVD-RAM media

- DVD±R/RW media

- Manufacturers

AudioDev (http://www.audiodev.com/)

Audio Precision (http://www.audioprecision.com)

Aeco (http://www.aecogroup.com/)

CD Associates (http://www.cdassociates.com/)

Clover Systems (http://www.cloversystems.com)

Cube Tec (http://www.cube-tec.com)

DaTARIUS (http://www.datarius.com/)

Dr. Schenk (http://www.drschenk.com/)

Eclipse (http://www.eclipsedata.com/)

Pulstec (http://www.pulstec.co.jp/Epulstec/)

Reflekt Technology (http://www.reflekt.com/)

Quantized Systems (http://www.quantized.com/)

Sony Precision Technology (http://www.sonypt.com/)

Stagetech (http://www.stagetech.se/)

- Testing services

AudioDev (http://www.audiodev.com/)

MS Science (http://www.mscience.com)

Philips (http://www.licensing.philips.com)

Sony (http://www.sony.co.jp/en/Products/Verification/)

- Testing software

Eclipse Data (http://www.eclipsedata.com)

- Testing Standards

Philips has a testing methodology for measuring CDs called "CD Reference Measuring Methods", a description of how to measure the physical parameters of a CD in a standardized way. Its price is $200. More information about measuring writing quality can be found in the Philips/Sony Book standards (Orange/Red/Yellow/Blue/White Books), IEC 908, ISO/IEC 10149, and ECMA-130.

25. Calibration media

In order to ensure perfect performance and reliable test results, test players

have to be regularly calibrated. Over time players get less accurate because

of several influences and effects on electronics and other components.

Some examples:

- Ageing of parts like laser diodes and mechanical components (swing arm,

sled)

- Distorting of optical pick-up head (influencing spot size and shape and

orientation of wave front)

- Malfunctioning of components like pick-up head due to contamination and

temperature changes

- Drifting of servo electronics

Philips test disc SBC 444A

This disc has built-in defects that can be used for checking error rates. In addition to providing known errors, it tests the player under maximum stress. Because the disc stresses the player to its limits, certain results may not be perfectly repeatable. For instance, the number of E32 errors generated by the black spots in particular may vary. This is because these errors are "soft errors": they are caused by disturbance to the player's servo systems rather than by loss of data. Each time the disc is played, the disturbance is slightly different, and the results cannot be predicted.

Disc SBC 444A provides two kinds of defects: missing information and black spots. The tracks with missing information should provide fairly repeatable results, since these errors are encoded into the data. The sections with black spots have the information intact, but obscured by the black spots. In this case, not only is information hidden from the pickup, but the servomechanisms are also stressed. For example, when the readout beam encounters a black spot, the focus, track-following, and clock-recovery servo signals disappear. After the beam has passed the black spot and the signal is restored, the pickup is out of focus and off track, and the bit clock is at the wrong frequency. This causes many additional errors to be generated in an unpredictable way.
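The difference between repeatable and non-repeatable results can be put into numbers by playing the same track several times and comparing the spread of the error counts. The sketch below is only an illustration: the function name `repeatability` and the E32 counts in the usage example are made up, not measurements from SBC 444A.

```python
from statistics import mean, stdev


def repeatability(counts):
    """Summarize error counts from repeated plays of the same track.

    Returns (mean, sample standard deviation, coefficient of variation).
    A coefficient of variation near zero means the result is repeatable.
    """
    if len(counts) < 2:
        raise ValueError("need at least two plays to judge repeatability")
    avg = mean(counts)
    spread = stdev(counts)
    # Error counts are non-negative, so a zero mean implies zero spread.
    cv = spread / avg if avg else 0.0
    return avg, spread, cv


if __name__ == "__main__":
    # Hypothetical E32 counts from five plays of each kind of track.
    missing_information_track = [40, 40, 41, 40, 40]
    black_spot_track = [12, 55, 3, 31, 78]
    for name, counts in [("missing information", missing_information_track),
                         ("black spots", black_spot_track)]:
        avg, spread, cv = repeatability(counts)
        print(f"{name}: mean={avg:.1f} stdev={spread:.1f} cv={cv:.2f}")
```

A track whose errors are encoded into the data should give a coefficient of variation close to zero, while a black-spot track would be expected to show a much larger one.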

Test Signal Disc 5B.3

The HF Test Signal Disc 5B.3 is a calibration disc designed for manufacturers of CDs, CD players, and CD test equipment. The disc costs $100.

Multi-point Calibration CD Disc set

The discs are intended to be used for calibration of CD test equipment. The

Multi-point Calibration CD Set contains three (3) discs:

Disc 1: Predefined BLER, HF measurements.

Disc 2: Predefined radial noise, predefined radial acceleration.

Disc 3: Predefined jitter and effect length.

This three-disc Philips Multi-Point Calibration Set is available from Philips Consumer Electronics, Co-ordination Office Optical and Magnetic Media Systems, Building SWA-112, PO Box 80002, 5600 JB Eindhoven, The Netherlands (fax +31 40 2732113). The complete set costs US$750; individually, Disc 1 costs US$275, Disc 2 US$325, and Disc 3 US$300. Shipping charges are extra. Each disc contains multiple test points recorded on a dedicated track. Tracks are separated by gaps.

- Disc 1, RCD-BH.1, contains multi-point tracks for BLER, E32, burst error length, I11/Itop, I3/Itop, Itop, push pull, cross talk, radial contrast, and asymmetry. Stable errors are created by irregular pit patterns.

- Disc 2, RCD-RA.1, provides multi-point radial noise and radial acceleration values that are generated by deviated tracks.

- Disc 3, RCD-JT.1, supports multi-point correlation for jitter and effect length (length deviation) that is controlled by modulated pit and land patterns.
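Having several known test points per parameter makes it possible to correct an analyzer over its whole measuring range rather than at a single value. The sketch below fits a straight-line correction through pairs of (analyzer reading, certified disc value) by least squares. It is a generic illustration, not the procedure Philips or any analyzer vendor prescribes; the function names and the readings in the usage example are hypothetical.

```python
def fit_linear_correction(measured, reference):
    """Least-squares fit of reference = gain * measured + offset."""
    n = len(measured)
    if n < 2 or n != len(reference):
        raise ValueError("need at least two matching calibration points")
    mean_x = sum(measured) / n
    mean_y = sum(reference) / n
    sxx = sum((x - mean_x) ** 2 for x in measured)
    if sxx == 0:
        raise ValueError("all readings are identical; cannot fit a line")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(measured, reference))
    gain = sxy / sxx
    offset = mean_y - gain * mean_x
    return gain, offset


def apply_correction(reading, gain, offset):
    """Convert a raw analyzer reading into a calibrated value."""
    return gain * reading + offset


if __name__ == "__main__":
    # Hypothetical jitter readings (ns) against hypothetical certified values.
    analyzer_readings = [21.0, 26.5, 32.2, 37.4]
    certified_values = [20.0, 25.0, 30.0, 35.0]
    gain, offset = fit_linear_correction(analyzer_readings, certified_values)
    print(f"gain={gain:.4f} offset={offset:.4f}")
    print(f"corrected 30.0 -> {apply_correction(30.0, gain, offset):.2f}")
```

A straight line is the simplest choice; if the residuals at the calibration points remain large after the fit, a single gain and offset is not enough to describe the analyzer's error.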

26. Tests before recording


Not all testing equipment can measure unwritten (blank) media. In general there are three types of tests. For a detailed explanation, visit Quantized (http://www.quantized.com).

Pre-Groove Integrity Tests

- Wobble Amplitude

- Normalized Wobble Amplitude

- Wobble Carrier To Noise Ratio (Wobble CNR)

- Locking Frequency Of Wobble (FWOB)

- Radial Contrast Before Recording (RCb)

Blank CD-R Servo Parameters

Blank CD-R HF Tests Parameters

- Intensity of Land Signal (ILAND)

- Intensity Of Groove Signal (IGROOVE)

- Radial Noise (RN)

The abbreviations and meanings of the various measurements for blank CD-R/RW media are listed below:

- Igb: Signal Level of Groove

- Ilb: Signal Level of Land

- RCb: Radial Contrast

- TEb: Push Pull Amplitude

- Push-Pull b: 0.1µm Offset Push-Pull

- RNb: Radial Noise of Push-Pull

- WNRb: Wobble CNR

- Iwp-p: Wobble Amplitude

- NWS: Normalised Wobble Signal

- Rgb: Reflectivity of Groove

- Rlb: Reflectivity of Land

- ATER: ATIP error rate

- ATBEL: Maximum ATIP Errors

- ATIP Jitter

27. Tests after recording


In general there are three types of tests. For a detailed explanation, visit Quantized (http://www.quantized.com).

Data Channel tests

These tests are concerned with the integrity of the data decoded from the disc, in terms of the number and severity of errors on the disc. This is a good overall indication of disc quality; however, when underlying problems cause a high error rate, the root of the problem has to be found by looking at the other tests. This category contains only the BLER measurement.
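As an illustration of how a BLER figure can be derived from raw counts, the sketch below averages per-second counts of erroneous blocks over a sliding ten-second window. The constants are the commonly quoted ones (7350 blocks per second at 1x speed and a limit of 220 erroneous blocks per second averaged over ten seconds) and are stated here as assumptions, since this section does not give them. The function names and the sample counts are hypothetical.

```python
BLOCKS_PER_SECOND = 7350   # assumed: blocks read per second at 1x speed
BLER_LIMIT = 220           # assumed: commonly quoted limit, 10 s average


def windowed_bler(bad_blocks_per_second, window=10):
    """Average the per-second bad-block counts over every full window."""
    if len(bad_blocks_per_second) < window:
        raise ValueError("need at least one full window of samples")
    total = sum(bad_blocks_per_second[:window])
    averages = [total / window]
    for i in range(window, len(bad_blocks_per_second)):
        # Slide the window forward by one second.
        total += bad_blocks_per_second[i] - bad_blocks_per_second[i - window]
        averages.append(total / window)
    return averages


def summarize_bler(bad_blocks_per_second, window=10, limit=BLER_LIMIT):
    """Report average and worst-window BLER and whether the limit is met."""
    averages = windowed_bler(bad_blocks_per_second, window)
    peak = max(averages)
    overall = sum(bad_blocks_per_second) / len(bad_blocks_per_second)
    return {
        "average_bler": overall,
        "peak_windowed_bler": peak,
        "peak_fraction_of_blocks": peak / BLOCKS_PER_SECOND,
        "within_limit": peak <= limit,
    }


if __name__ == "__main__":
    # Hypothetical scan: a quiet disc with a two-second burst of errors.
    counts = [10] * 10 + [500] * 2
    print(summarize_bler(counts))
```

Averaging over a window is what allows a short burst to pass: a single bad second can exceed the limit on its own and still leave the ten-second average below it.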

Servo tests

These tests generally indicate the trackability of the target disc, that is, whether there are problems with the overall track geometry that are likely to cause playability problems. This category contains the following measurements:

- Push Pull

- Radial Noise

- Radial Acceleration

High Frequency tests

These tests examine the read signals from the laser pickup of the test player. The nature of these signals reflects the overall pit structure on the disc and is usually a good indicator of disc playability. This category contains the following measurements:

- Reflectivity

- I3/Itop

- I11/Itop

- Asymmetry

- Beta

- Cross Talk

- Radial Contrast

- Eccentricity

- Jitter

The abbreviations and meanings of the various measurements for written CD-R/RW media are listed below. Note that not all of these measurements are described in the CD specifications. Several manufacturers of testing equipment, building on the existing standards, created new measurements that in their view describe the condition of a written disc better:

- Iga: Signal Level of Groove

- Ila: Signal Level of Land

- RCa: Radial Contrast

- TEa: Push Pull Amplitude

- Push-Pull a: 0.1µm Offset Push-Pull

- RNa: Radial Noise of Push-Pull

- WNRa: Wobble CNR

- Cross Talk

- Itop

- I3/Itop

- I11/Itop

- I3/I11

- Asymmetry

- Beta (?)

- NPPR: Normalised Push-Pull Ratio

- Rtop: Reflectivity from Itop

- Rga: Reflectivity of Groove

- Rla: Reflectivity of Land

- C1: Error rate/sec

- C2: Error rate/sec

- CU: Total Number of CU Errors

- Jitter

- ATER: ATIP Error rate

- ATBEL: Maximum ATIP Errors

- ATIP Jitter

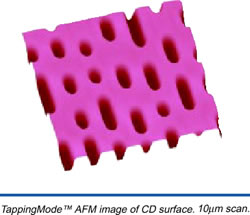

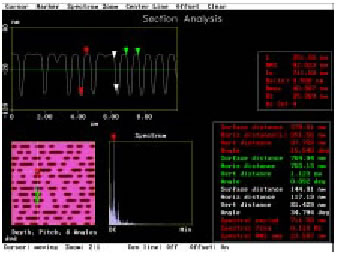

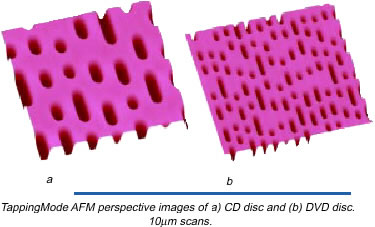

28. Atomic Force Microscopy


Although the industry checks the writing quality of stampers with CD/DVD analyzers, Atomic Force Microscopy offers an alternative. This technology is mostly used not for written media, but during the development of dye layers or for testing stamper quality.

Pit fidelity is a determining factor for the playability of a disc and the

error rate of the disc. The final CDs produced for sale are not free of defects.

Defects in the pit surface may be caused by mastering errors, stamper defects,

and manufacturing (molding) defects. An elaborate error correction scheme with

data redundancy is utilized to allow the compact disc to offer robust performance

in the presence of some errors. Atomic Force Microscopy (AFM) is a tool for

manufacturers to identify defects and their causes on both the stamper and disc

surfaces.

Optical disc manufacturers continue to push for faster cycle times to increase

capacity while maintaining disc quality. This creates the need for improved

methods to analyze the quality of the disc and stamper surfaces. AFMs are ideally

suited to the characterization of nanometer sized pit and bump structures in

CD and DVD manufacturing.

AFM provides quantitative, three dimensional imaging of the disc or stamper

surface within minutes. Similar quantitative information is possible using SEM

or TEM, but these techniques are destructive, time consuming, measure in only

two dimensions, and provide a limited field of view. Another major advantage

of AFM over other techniques is that once an image is captured, cross sections

can be obtained in seconds to provide pit depth, pit width, pit side-wall angle,

and track pitch anywhere in the data set - and without physically damaging the

disc.
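To make the cross-section idea concrete, the sketch below takes one height profile across a single pit (positions and heights in nanometres) and derives depth, width, and the two side-wall angles. It is a simplified illustration, not the algorithm of any AFM software: the land level is taken from the first and last few samples, the width is measured at half depth, and the wall angles are taken between 10% and 90% of the depth. All of these choices, the function names, and the trapezoidal test profile are assumptions.

```python
import math


def _interpolate(x, z, i, level):
    """x position where the profile crosses `level` between samples i and i+1."""
    return x[i] + (level - z[i]) * (x[i + 1] - x[i]) / (z[i + 1] - z[i])


def _left_wall(x, z, level):
    """First crossing going down into the pit, scanning left to right."""
    for i in range(len(z) - 1):
        if z[i] > level >= z[i + 1]:
            return _interpolate(x, z, i, level)
    raise ValueError("profile never descends through the requested level")


def _right_wall(x, z, level):
    """Last crossing coming back up out of the pit."""
    for i in range(len(z) - 2, -1, -1):
        if z[i] <= level < z[i + 1]:
            return _interpolate(x, z, i, level)
    raise ValueError("profile never rises through the requested level")


def analyze_pit(x, z, edge_samples=3):
    """Depth, half-depth width and side-wall angles of one pit profile."""
    # Assumption: the first and last few samples lie on the land.
    land = (sum(z[:edge_samples]) + sum(z[-edge_samples:])) / (2 * edge_samples)
    depth = land - min(z)  # min() is noise-sensitive; fine for a sketch
    if depth <= 0:
        raise ValueError("no pit found in profile")

    def level(fraction):
        return land - fraction * depth

    width = _right_wall(x, z, level(0.5)) - _left_wall(x, z, level(0.5))
    rise = level(0.1) - level(0.9)
    left_run = _left_wall(x, z, level(0.9)) - _left_wall(x, z, level(0.1))
    right_run = _right_wall(x, z, level(0.1)) - _right_wall(x, z, level(0.9))
    return {
        "depth": depth,
        "width": width,
        "left_angle_deg": math.degrees(math.atan2(rise, left_run)),
        "right_angle_deg": math.degrees(math.atan2(rise, right_run)),
    }


if __name__ == "__main__":
    # Hypothetical trapezoidal pit: 100 nm deep, walls 100 nm wide.
    x = [0, 100, 200, 300, 400, 500, 600, 700, 800]
    z = [0, 0, 0, -100, -100, -100, 0, 0, 0]
    print(analyze_pit(x, z))
```

For a stamper, where the features are bumps rather than pits, the same approach would apply with the profile inverted. On real data the measured wall angle is also limited by the shape of the AFM tip, so steep walls may read shallower than they are.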

For pit characterization with AFM, the discs may be examined after molding

and before metallization. Representative three-dimensional AFM images of CD

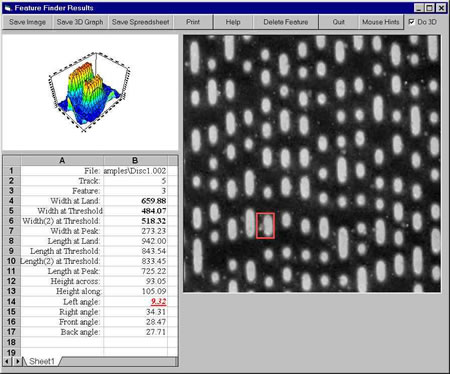

and DVD stamped discs (replicas) are shown below:

Both images were obtained at the same lateral magnification; the increased

pit density and reduced track pitch for DVD are readily apparent. The types

of measurements which AFM can provide for CD and DVD characterization are listed

in the below table:

| Pits (disc) | Bumps (stamper) | Tracks |
| --- | --- | --- |
| Depth | Height | Pitch |
| Width | Width | |
| Left/right sidewall angle | Left/right sidewall angle | |
| Roughness of pit floor | Roughness of bump surface | |

CD and DVD measurements readily accessible by AFM. Note that the position at

which the width is measured will have to be determined depending on the ability

of the tip to measure sidewall angles accurately.

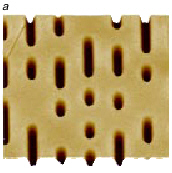

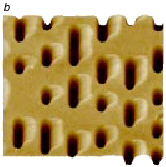

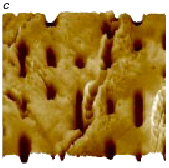

The corresponding images show changes in the CD pit structure which accompany both the staining observable by eye and the BLER measurements.

In Figure a, the pit structure is uniform and replicates the stamper accurately.

In Figure b, the pits are locally deformed, with polycarbonate piled up or smeared toward the perimeter of the disc. This deformation is manifested as visible staining and can lead to high block error rates, depending on its severity.

In Figure c, the polymer surface is severely distorted and some of the pits are barely recognizable.

In the lands (surfaces surrounding pits), the polymer appears to have stuck